Great news: the new issue of SIGEvolution has been released (click the cover above to go to the SIGEvolution site). This issue includes an interview with Hans-Paul Schwefel.

Last night I read a post on Reddit by Matthew Rollings showing Python code that solves the Eight Queens puzzle with an EA (evolutionary algorithm). So I decided to implement it in Python again, but this time using Pyevolve. Here is the code:

    from pyevolve import *
    from random import shuffle

    BOARD_SIZE = 64

    def queens_eval(genome):
        collisions = 0
        # Any genome that is not a permutation of 0..BOARD_SIZE-1 scores 0
        for i in xrange(0, BOARD_SIZE):
            if i not in genome: return 0
        # genome[i] is the column of the queen in row i; in a permutation rows
        # and columns never clash, so only diagonal attacks are counted
        for i in xrange(0, BOARD_SIZE):
            col = False
            for j in xrange(0, BOARD_SIZE):
                if (i != j) and (abs(i-j) == abs(genome[j]-genome[i])):
                    col = True
            if col: collisions += 1
        # The score is the number of queens that are not attacked
        return BOARD_SIZE-collisions

    def queens_init(genome, **args):
        # Start every individual as a random permutation of the columns
        genome.genomeList = range(0, BOARD_SIZE)
        shuffle(genome.genomeList)

    def run_main():
        genome = G1DList.G1DList(BOARD_SIZE)
        # Stop as soon as an individual reaches the perfect raw score
        genome.setParams(bestrawscore=BOARD_SIZE, rounddecimal=2)
        genome.initializator.set(queens_init)
        # Swap mutation and cut-and-crossfill crossover both keep permutations valid
        genome.mutator.set(Mutators.G1DListMutatorSwap)
        genome.crossover.set(Crossovers.G1DListCrossoverCutCrossfill)
        genome.evaluator.set(queens_eval)

        ga = GSimpleGA.GSimpleGA(genome)
        ga.terminationCriteria.set(GSimpleGA.RawScoreCriteria)
        ga.setMinimax(Consts.minimaxType["maximize"])
        ga.setPopulationSize(100)
        ga.setGenerations(5000)
        ga.setMutationRate(0.02)
        ga.setCrossoverRate(1.0)

        # This DBAdapter is to create graphs later, it'll store statistics in
        # a SQLite db file
        sqlite_adapter = DBAdapters.DBSQLite(identify="queens")
        ga.setDBAdapter(sqlite_adapter)

        ga.evolve(freq_stats=10)

        best = ga.bestIndividual()
        print best
        print "\nBest individual score: %.2f\n" % (best.score,)

    if __name__ == "__main__":
        run_main()
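To see what `queens_eval` above rewards without installing Pyevolve, here is a minimal standalone sketch of the same scoring idea in plain Python. `queens_fitness` is a hypothetical helper, not part of Pyevolve, and it assumes the same encoding: `perm[row]` holds the column of the queen in that row.

```python
def queens_fitness(perm):
    """Score a permutation-encoded board: perm[row] is the queen's column.

    Non-permutations score 0; otherwise the score is the number of queens
    that no other queen attacks along a diagonal. Rows and columns cannot
    clash because every row and every column is used exactly once.
    """
    n = len(perm)
    if sorted(perm) != list(range(n)):
        return 0
    attacked = 0
    for i in range(n):
        # Queen i is attacked if some other queen shares one of its diagonals
        if any(i != j and abs(i - j) == abs(perm[i] - perm[j]) for j in range(n)):
            attacked += 1
    return n - attacked


if __name__ == "__main__":
    print(queens_fitness([0, 4, 7, 5, 2, 6, 1, 3]))  # a known 8-queens solution: 8
    print(queens_fitness(list(range(8))))            # all queens on one diagonal: 0
```

A solved board therefore scores exactly the board size, which is why the Pyevolve run uses `bestrawscore=BOARD_SIZE` together with `RawScoreCriteria` as its stopping rule.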

The Pyevolve run took 49 generations to solve a 64×64 chessboard (4,096 squares, 64 queens); here is the output:

    Gen. 0 (0.00%): Max/Min/Avg Fitness(Raw) [20.83(27.00)/13.63(7.00)/17.36(17.36)]
    Gen. 10 (0.20%): Max/Min/Avg Fitness(Raw) [55.10(50.00)/39.35(43.00)/45.92(45.92)]
    Gen. 20 (0.40%): Max/Min/Avg Fitness(Raw) [52.51(55.00)/28.37(24.00)/43.76(43.76)]
    Gen. 30 (0.60%): Max/Min/Avg Fitness(Raw) [67.45(62.00)/51.92(54.00)/56.21(56.21)]
    Gen. 40 (0.80%): Max/Min/Avg Fitness(Raw) [65.50(62.00)/19.89(31.00)/54.58(54.58)]
    Evolution stopped by Termination Criteria function !
    Gen. 49 (0.98%): Max/Min/Avg Fitness(Raw) [69.67(64.00)/54.03(56.00)/58.06(58.06)]
    Total time elapsed: 39.141 seconds.

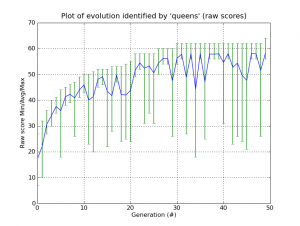

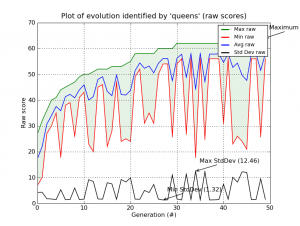

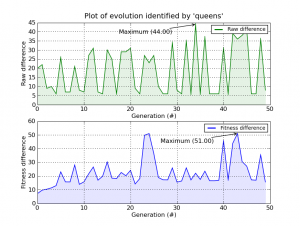

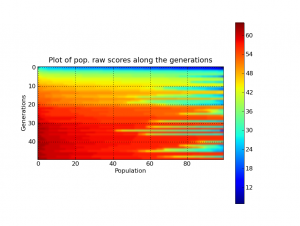

And here is the plots generated by the Graph Plot Tool of Pyevolve:

UPDATE 19/09: it seems that some people misunderstood the post title, so here is a clarification: I’m not comparing Word with OpenOffice or anything like that. The title refers to OpenOffice’s design choice of offering Python 2.6 as a scripting language; it’s a humorous title and should not be taken literally.

This is a very simple but powerful Python script that calls the Google Sets API from inside OpenOffice 3.1.1 Writer (in fact it’s not an API call, since Google doesn’t have an official API for the Sets service, but an interesting and well-done scraper using BeautifulSoup). Anyway, you can watch the video to see what it actually does:

And here is the very complex source-code:

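    from xgoogle.googlesets import GoogleSets

    def growMyLines():
        """ Calls Google Set unofficial API (xgoogle) """
        # XSCRIPTCONTEXT is injected into the module by OpenOffice's Python script provider
        doc = XSCRIPTCONTEXT.getDocument()
        controller = doc.getCurrentController()
        # Current selection in Writer (a collection of text ranges)
        selection = controller.getSelection()
        # Only the first selected range is used; each line is one seed item
        text_range = selection.getByIndex(0)
        lines_list = text_range.getString().split("\n")

        gset = GoogleSets(lines_list)
        gset_results = gset.get_results()

        # Replace the selection with the expanded set, one item per line
        results_concat = "\n".join(gset_results)
        text_range.setString(results_concat)

    # Functions listed here are the ones OpenOffice exposes as macros
    g_exportedScripts = growMyLines,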

You need to put the “xgoogle” module inside the “OpenOffice.org 3\Basis\program\python-core-2.6.1\lib” path, and the above script inside “OpenOffice.org 3\Basis\share\Scripts\python”.

I hope you enjoyed it =) With the new Python 2.6 core in OpenOffice 3, they have pushed the productivity potential to the limit.

Hello, this is a bridge between Subversion (svn) and Twitter: the tool updates a Twitter account when new commit messages arrive in a Subversion repository. We almost never have access to the svn repository server to add a post-commit hook that calls our script and sends updates to Twitter, so this tool was written to overcome that situation. With it, you can monitor, for example, an svn repository on Google project hosting, Sourceforge.net, etc.

The tool works in a simple way: first it checks the Twitter account for the last svn commit message (these messages always start with a “$” prefix, or with another user-defined prefix); then it checks the svn repository server for commits newer than the last tweeted revision and, if there are any, posts the newer commit messages to Twitter.
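The synchronization decision described above can be separated from the network calls. Here is a minimal sketch of that logic in plain Python, assuming the tweets arrive as a list of texts (newest first) and the svn log entries as `(revision, author, date, message)` tuples; in the real script these come from python-twitter and pysvn, and `last_tweeted_revision` and `messages_to_post` are hypothetical helper names. The message format and regex are the ones used by `twittsvn.py`.

```python
import re

TWITTER_PREFIX = "$"
TWITTER_MSG = TWITTER_PREFIX + "[%s] Rev. %d by [%s] %s: %s"
REV_REGEX = re.compile(r"Rev\. (\d+) by")


def last_tweeted_revision(timeline):
    """Revision number in the newest commit tweet, or None if there is none."""
    for text in timeline:  # newest tweet first
        if text.startswith(TWITTER_PREFIX):
            match = REV_REGEX.search(text)
            return int(match.group(1)) if match else None
    return None


def messages_to_post(timeline, logs, reponame):
    """Format the svn log entries newer than the last tweeted revision.

    timeline: tweet texts, newest first.
    logs: (revision, author, date, message) tuples, in any order.
    """
    last_rev = last_tweeted_revision(timeline)
    pending = []
    for rev, author, date, message in sorted(logs):  # oldest revision first
        if last_rev is not None and rev <= last_rev:
            continue  # already on Twitter
        message = message.strip() or "(no message)"
        pending.append(TWITTER_MSG % (reponame, rev, author, date, message))
    return pending
```

Keeping this part free of I/O makes it easy to test; the caller only has to fetch the timeline, fetch a limited number of svn logs and post whatever `messages_to_post` returns.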

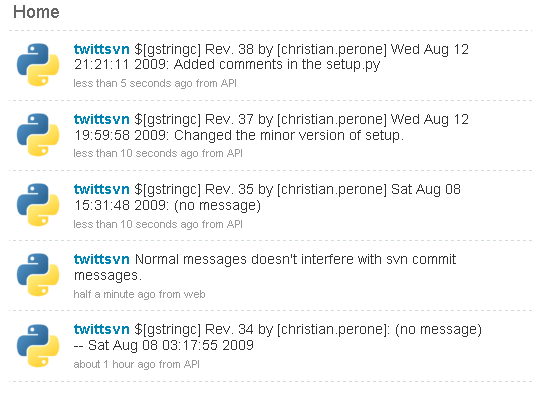

Here is a simple example of a Twitter account updated with commit messages:

The tool is very simple to use from the command line:

    Python twittsvn v.0.1
    By Christian S. Perone
    https://blog.christianperone.com

    Usage: twittsvn.py [options]

    Options:
      -h, --help            show this help message and exit

      Twitter Options:
        Twitter Accounting Options

        -u TWITTER_USERNAME, --username=TWITTER_USERNAME
                            Twitter username (required).
        -p TWITTER_PASSWORD, --password=TWITTER_PASSWORD
                            Twitter password (required).
        -r TWITTER_REPONAME, --reponame=TWITTER_REPONAME
                            Repository name (required).
        -c TWITTER_COUNT, --twittercount=TWITTER_COUNT
                            How many tweets to fetch from Twitter, default is
                            '30'.

      Subversion Options:
        Subversion Options

        -s SVN_PATH, --spath=SVN_PATH
                            Subversion path, default is '.'.
        -n SVN_NUMLOG, --numlog=SVN_NUMLOG
                            Number of SVN logs to get, default is '5'.

And here is a simple example:

    # python twittsvn.py -u twitter_username -p twitter_password \
        -r any_repository_name

You must run it inside a repository working copy (your local files, not the server) or use the “-s” option to specify the path of your working copy. You should schedule this script to run periodically using cron or something similar.

To use the tool, you must install pysvn and python-twitter. You can install python-twitter with “easy_install python-twitter”, but for pysvn you must check the download section of the project site.

Here is the source-code of the twittsvn.py:

    # python-twittsvn - A SVN/Twitter bridge
    # Copyright (C) 2009 Christian S. Perone
    #
    # This program is free software: you can redistribute it and/or modify
    # it under the terms of the GNU General Public License as published by
    # the Free Software Foundation, either version 3 of the License, or
    # (at your option) any later version.
    #
    # This program is distributed in the hope that it will be useful,
    # but WITHOUT ANY WARRANTY; without even the implied warranty of
    # MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the
    # GNU General Public License for more details.
    #
    # You should have received a copy of the GNU General Public License
    # along with this program. If not, see .

    import sys
    import re
    import time
    from optparse import OptionParser, OptionGroup

    __version__ = "0.1"
    __author__ = "Christian S. Perone "

    TWITTER_PREFIX = "$"
    TWITTER_MSG = TWITTER_PREFIX + "[%s] Rev. %d by [%s] %s: %s"

    try:
        import pysvn
    except ImportError:
        raise ImportError, "pysvn not found, check http://pysvn.tigris.org/project_downloads.html"

    try:
        import twitter
    except ImportError:
        raise ImportError, "python-twitter not found, check http://code.google.com/p/python-twitter"

    def ssl_server_trust_prompt(trust_dict):
        return True, 5, True

    def run_main():
        parser = OptionParser()
        print "Python twittsvn v.%s\nBy %s" % (__version__, __author__)
        print "https://blog.christianperone.com\n"

        group_twitter = OptionGroup(parser, "Twitter Options",
                                    "Twitter Accounting Options")
        group_twitter.add_option("-u", "--username", dest="twitter_username",
                                 help="Twitter username (required).",
                                 type="string")
        group_twitter.add_option("-p", "--password", dest="twitter_password",
                                 help="Twitter password (required).",
                                 type="string")
        group_twitter.add_option("-r", "--reponame", dest="twitter_reponame",
                                 help="Repository name (required).",
                                 type="string")
        group_twitter.add_option("-c", "--twittercount", dest="twitter_count",
                                 help="How many tweets to fetch from Twitter, default is '30'.",
                                 type="int", default=30)
        parser.add_option_group(group_twitter)

        group_svn = OptionGroup(parser, "Subversion Options",
                                "Subversion Options")
        group_svn.add_option("-s", "--spath", dest="svn_path",
                             help="Subversion path, default is '.'.",
                             default=".", type="string")
        group_svn.add_option("-n", "--numlog", dest="svn_numlog",
                             help="Number of SVN logs to get, default is '5'.",
                             default=5, type="int")
        parser.add_option_group(group_svn)

        (options, args) = parser.parse_args()

        if options.twitter_username is None:
            parser.print_help()
            print "\nError: you must specify a Twitter username !"
            return

        if options.twitter_password is None:
            parser.print_help()
            print "\nError: you must specify a Twitter password !"
            return

        if options.twitter_reponame is None:
            parser.print_help()
            print "\nError: you must specify any repository name !"
            return

        twitter_api = twitter.Api(username=options.twitter_username,
                                  password=options.twitter_password)
        twitter_api.SetCache(None) # Dammit cache !

        svn_api = pysvn.Client()
        svn_api.callback_ssl_server_trust_prompt = ssl_server_trust_prompt

        print "Checking Twitter synchronization..."
        status_list = twitter_api.GetUserTimeline(options.twitter_username,
                                                  count=options.twitter_count)
        print "Got %d statuses to check..." % len(status_list)

        last_twitter_commit = None
        for status in status_list:
            if status.text.startswith(TWITTER_PREFIX):
                print "SVN Commit messages found !"
                last_twitter_commit = status
                break

        print "Checking SVN logs for ['%s']..." % options.svn_path
        log_list = svn_api.log(options.svn_path, limit=options.svn_numlog)

        if last_twitter_commit is None:
            print "No twitter SVN commit messages found, posting last %d svn commit messages..." % options.svn_numlog
            log_list.reverse()
            for log in log_list:
                message = log["message"].strip()
                date = time.ctime(log["date"])
                if len(message) <= 0: message = "(no message)"
                twitter_api.PostUpdate(TWITTER_MSG % (options.twitter_reponame,
                                                      log["revision"].number,
                                                      log["author"], date, message))
            print "Posted %d svn commit messages to twitter !" % len(log_list)
        else:
            print "SVN commit messages found in twitter, checking last revision message...."
            msg_regex = re.compile(r'Rev\. (\d+) by')
            last_rev_twitter = int(msg_regex.findall(last_twitter_commit.text)[0])
            print "Last revision detected in twitter is #%d, checking for new svn commit messages..." % last_rev_twitter

            rev_num = pysvn.Revision(pysvn.opt_revision_kind.number, last_rev_twitter+1)
            try:
                log_list = svn_api.log(options.svn_path, revision_end=rev_num,
                                       limit=options.svn_numlog)
            except pysvn.ClientError:
                print "No more revisions found !"
                log_list = []

            if len(log_list) <= 0:
                print "No new SVN commit messages found !"
                print "Updated !"
                return

            log_list.reverse()
            print "Posting new messages to twitter..."
            posted_new = 0
            for log in log_list:
                message = log["message"].strip()
                date = time.ctime(log["date"])
                if len(message) <= 0:
                    message = "(no message)"
                if log["revision"].number > last_rev_twitter:
                    twitter_api.PostUpdate(TWITTER_MSG % (options.twitter_reponame,
                                                          log["revision"].number,
                                                          log["author"], date, message ))
                    posted_new+=1
            print "Posted new %d messages to twitter !" % posted_new
            print "Updated!"

    if __name__ == "__main__":
        run_main()

Benford’s law is one of those very weird things that are hard to explain, and the more phenomena we discover that obey it, the more astonished we become. Two people (Simon Newcomb in 1881 and Frank Benford in 1938) noticed the law in the same way, while flipping through books of logarithm tables: the pages at the beginning of the book were dirtier than the pages at the end.
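The law itself is compact: the probability that the leading digit is d is log10(1 + 1/d), so digit 1 leads about 30.1% of the time and digit 9 only about 4.6%. That is exactly why the first pages of a log table, covering numbers that start with 1, wore out first. A minimal sketch computing the expected distribution (`benford_distribution` is just an illustrative name):

```python
import math


def benford_distribution():
    """Expected first-digit probabilities under Benford's law: P(d) = log10(1 + 1/d)."""
    return {d: math.log10(1 + 1.0 / d) for d in range(1, 10)}


if __name__ == "__main__":
    for digit, prob in sorted(benford_distribution().items()):
        print("%d: %.3f" % (digit, prob))
```

The nine probabilities sum to exactly 1, because the terms telescope to log10(10).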

Currently, there are no a priori criteria that tell us whether a dataset will obey Benford’s Law, and that is why I decided to analyze the Twitter Public Timeline.

The Twitter API method for the Public Timeline is simply useless for this analysis because, according to the API docs, the Public Timeline is cached for 60 seconds! 60 seconds is an eternity, and there is a rate limit of 150 requests/hour. So it doesn’t help, buuuuuut there is an alpha-testing API with pretty and very useful streams of data from the Public Timeline. The Twitter Streaming API has many methods, and the most interesting one is the “Firehose”, which returns ALL public statuses, but this method is only available to intere$ting people, and I’m not one of them. Buuuut we have “Spritzer”, which returns a portion of all public statuses; since it’s all we have available at the moment, it MUST be useful, and it’s a pretty stream of data =)

So I took the Spritzer real-time stream and processed each incoming status with a regex to find all the numbers in it; if the status was “I have 5 dogs and 3 cats”, the numbers collected would be “[5, 3]”. All the accumulated numbers were then checked against Benford’s Law. I used Matplotlib to plot the two curves (Benford’s Law and the digit distribution of the Twitter statuses) to empirically observe the correlation between them. In the upper right corner of the video you can also see the Pearson’s correlation between the two distributions.
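The processing steps described above (extract numbers, count leading digits, correlate with Benford) can be sketched as follows. This is not the original tool, whose code isn’t shown in the post: the `\d+` pattern, the helper names and the decision to skip zeros are assumptions, and the Matplotlib plotting is left out.

```python
import math
import re

# Assumption: a "number" is any run of decimal digits (so "3.5" yields 3 and 5).
NUMBER_RE = re.compile(r"\d+")
# Expected Benford probabilities for leading digits 1..9
BENFORD = [math.log10(1 + 1.0 / d) for d in range(1, 10)]


def extract_numbers(status):
    """Return every integer found in a status text, in order of appearance."""
    return [int(token) for token in NUMBER_RE.findall(status)]


def first_digit_frequencies(numbers):
    """Relative frequencies of leading digits 1..9 (zero has no leading digit, so it is skipped)."""
    counts = [0] * 9
    for n in numbers:
        if n > 0:
            counts[int(str(n)[0]) - 1] += 1
    total = float(sum(counts))
    return [c / total if total else 0.0 for c in counts]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    n = float(len(xs))
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (sx * sy)


if __name__ == "__main__":
    statuses = ["I have 5 dogs and 3 cats", "Flight 1234 delayed 17 minutes"]
    numbers = []
    for status in statuses:
        numbers.extend(extract_numbers(status))
    print(numbers)  # [5, 3, 1234, 17]
    print(pearson(first_digit_frequencies(numbers), BENFORD))
```

In a streaming setting you would keep extending `numbers` as statuses arrive and recompute the correlation after each one; with only a handful of statuses the value is meaningless, which is why the 15-minute capture matters.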

Here is the video (I only noticed after creating it, but the colors of the curves are swapped relative to the legend):

The video covers 15 minutes (3,160 captured statuses) of the Twitter Public Timeline. At the end of the video, you can see a Pearson’s correlation of 0.95. It seems that we have found another Benford’s son =)

The little tool that handles the Twitter Spritzer stream and plots the correlation graph in real time was written entirely in Python; I’ll clean it up and post it here as soon as I have time. The tool generated 1,823 PNG images, which were merged using ffmpeg.

I hope you enjoyed =)

UPDATE 11/08: the Reddit user “poobare” cited an interesting paper about Benford’s Law; here is the link.

More posts about Benford’s Law

Prime Numbers and the Benford’s Law

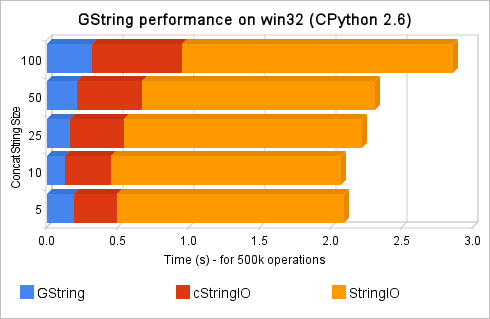

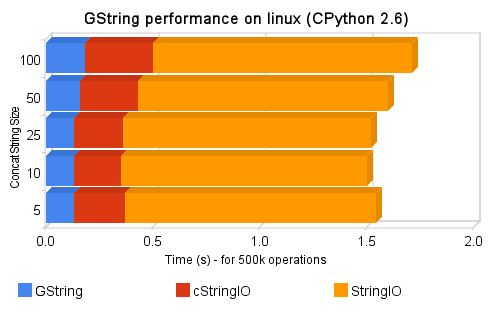

Last week I wrote the ctypes wrapper for GLib’s GString, but its performance compared with cStringIO or StringIO was very poor, due to the overhead of the ctypes calls. So I’ve written a new C extension for Python; the source code (for Linux) and install packages (for win32) are available at the project site. Here are some performance comparisons:

These tests measure string concatenation (append) with the three modules. You can find more about the tests and performance on the project site.
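For readers who want to run a similar append benchmark themselves, here is a minimal `timeit` sketch. It is not the project’s benchmark: GString isn’t reproduced here, and it targets Python 3, so it compares `io.StringIO` (the Python 3 successor of cStringIO/StringIO) with two pure-Python baselines, repeated `str +=` and list-then-`join`. The function names and sizes are illustrative assumptions.

```python
import io
import timeit


def concat_plus(n, piece="x"):
    """Append by rebuilding the string with +=."""
    s = ""
    for _ in range(n):
        s += piece
    return s


def concat_stringio(n, piece="x"):
    """Append into an in-memory text buffer."""
    buf = io.StringIO()
    for _ in range(n):
        buf.write(piece)
    return buf.getvalue()


def concat_join(n, piece="x"):
    """Collect pieces in a list and join once at the end."""
    parts = []
    for _ in range(n):
        parts.append(piece)
    return "".join(parts)


def benchmark(n=100000, repeat=3):
    """Best-of-`repeat` wall time (seconds) for one call of each strategy."""
    results = {}
    for fn in (concat_plus, concat_stringio, concat_join):
        results[fn.__name__] = min(timeit.repeat(lambda: fn(n), number=1, repeat=repeat))
    return results


if __name__ == "__main__":
    for name, seconds in sorted(benchmark().items()):
        print("%-16s %.4f s" % (name, seconds))
```

Taking the minimum of several repeats reduces noise from other processes; the absolute numbers depend heavily on the interpreter and machine, so only the relative ordering is worth comparing.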

I hope you enjoy =)

This post announces the Pyevolve user group I’ve created. Some users were requesting it, and I think it’s important to have a place to exchange experiences and help users; here is the group (it’s hosted on Google Groups).

Cya !

I’ve written a little wrapper for GLib’s GString functionality using Python ctypes. GString is an amazing and very stable piece of work by the GLib team; GLib is the core library used by GTK+ and many other libraries. The wrapper works on Windows and Linux; the performance results seem more interesting on Windows, but GString’s functionality is very interesting on any platform. The project is hosted here on Google project hosting.

Here are some examples of the wrapper:

    >>> from GString import GString
    >>> obj1 = GString("test")
    >>> obj1
    >>> print obj1
    test
    >>> obj1+obj1
    >>> obj1+=obj1
    >>> obj1
    >>> obj1.truncate(5)
    >>> obj1.insert(2, "xx")
    >>> obj1.assign("test")
    >>> obj1.erase(2,2)
    >>> obj1.assign("12345")
    >>> for i in xrange(len(obj1)):
    ...     print obj1[i]
    ...
    1
    2
    3
    4
    5

I hope you enjoy the wrapper; you can find performance comparisons on the project home page.