The same old historicism, now on AI

* This is a critical article regarding the presence of historicism in modern AI predictions for the future.

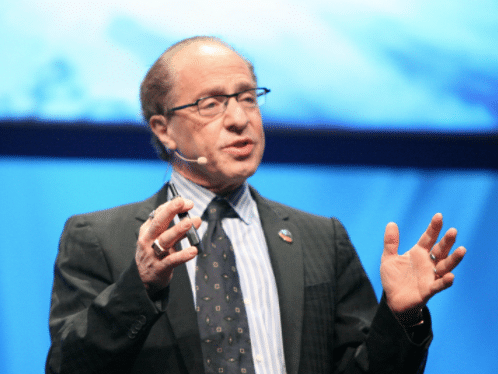

Perhaps you have already read about the Technological Singularity, since it is one of the hottest predictions for the future (there is even a university with that name), especially after the AI developments of the past few years and, more precisely, after the recent Deep Learning advances that attracted a lot of attention (and bad journalism too). In his book The Singularity Is Near (2005), Ray Kurzweil predicts that humans will transcend the “limitations of our biological bodies and brain”, stating also that “future machines will be human, even if they are not biological”. In other books, like The Age of Intelligent Machines (1990), he also predicts a new world government, computers passing Turing tests, exponential laws everywhere, and so on (it is not that hard to achieve a good recall rate with that many predictions, right?).

As science fiction, these predictions are pretty amazing, and many of them came very close to what happened in our “modern days” (I also really love the works of Arthur C. Clarke). However, a lot of people are dressing up in scientific clothes what is called “futurism”, sometimes also called “future studies” or “futurology”. As you can imagine, the last term is usually avoided for some obvious reasons (it sounds like astrology, and you don’t want to be linked to pseudo-science, right?).

In this post, I would like to talk not about the predictions themselves. Personally, I think that these points of view are really relevant to our future, just like the serious research on ethics and morality in AI. What I would like to criticize is a very particular aspect of how these ideas are being spread, and I want to make the point very clear: I’m NOT criticizing the predictions themselves, NOR the importance of these predictions and different views of the future, but the status claimed for these ideas, because there seems to be a major comeback of a kind of historicism in this particular field, and that is what I would like to discuss.

There is a very subtle line that makes it easy to slide from a personal prediction of historical events to a view where you pretend that these predictions have scientific status. Some harsh criticisms of the Technological Singularity were made in the past, such as this one from Steven Pinker (2008):

(…) There is not the slightest reason to believe in a coming singularity. The fact that you can visualize a future in your imagination is not evidence that it is likely or even possible. Look at domed cities, jet-pack commuting, underwater cities, mile-high buildings, and nuclear-powered automobiles—all staples of futuristic fantasies when I was a child that have never arrived. Sheer processing power is not a pixie dust that magically solves all your problems. (…)

– Steven Pinker, 2008

Steven Pinker is criticizing an important aspect here, one that is obvious but whose implication many people do not grasp: the fact that you can imagine something is neither a reason to believe nor evidence that it is possible. This is the same kind of transition, from what can be conceived to what exists, that Immanuel Kant criticized in the ontological argument.

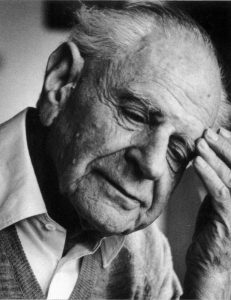

However, what I would like to criticize here is the fact that a lot of futurists are presenting these predictions as if they had scientific status. This is a gross misunderstanding of the scientific method, the same one that led to the development of social historicism in the past and that was harshly criticized, in the political context, by the philosopher Karl Popper in important works such as The Open Society and Its Enemies (1945) and The Poverty of Historicism (first presented in 1936 and published as a book in 1957).

Historicism, as Popper describes it, is characterized by the belief that once we have discovered the developmental laws of history (or of AI development, like the futurists’ exponential laws), they enable us to prophesy the destiny of man with scientific status. Karl Popper found that the dangerous habit of historical prophecy, so widespread among our intellectual leaders, has various functions:

“It is always flattering to belong to the inner circle of the initiated, and to possess the unusual power of predicting the course of history. Besides, there is a tradition that intellectual leaders are gifted with such powers, and not to possess them may lead to the loss of caste. The danger, on the other hand, of their being unmasked as charlatans is very small, since they can always point out that it is certainly permissible to make less sweeping predictions; and the boundaries between these and augury are fluid.”

– Karl Popper, 1945

Recently, we also witnessed the debate between Elon Musk and Mark Zuckerberg, where you’ll find all sorts of criticism of each other, but little or no humility regarding the limits of these claims. In The Open Society and Its Enemies, Karl Popper raises an important question about the social context that, as you’ll note, certainly applies here as well:

(…) Such arguments may sound plausible enough. But plausibility is not a reliable guide in such matters. In fact, one should not enter into a discussion of these specious arguments before having considered the following question of method: Is it within the power of any social science to make such sweeping historical prophecies? Can we expect to get more than the irresponsible reply of the soothsayer if we ask a man what the future has in store for mankind?

– Karl Popper, 1945

With that said, we should always remember the importance of our views and predictions about the future, but we should also never forget the status of these predictions, and we should always be responsible in how we spread these claims. They aren’t scientific by any means, and we shouldn’t take them as such, especially when dangerous ideas, such as the urge for control, are being justified by these personal prophecies about the future.

I would like to close this post by quoting Karl Popper:

The systematic analysis of historicism aims at something like scientific status. This book does not. Many of the opinions expressed are personal. What it owes to scientific method is largely the awareness of its limitations: it does not offer proofs where nothing can be proved, nor does it pretend to be scientific where it cannot give more than a personal point of view. It does not try to replace the old systems of philosophy by a new system. It does not try to add to all these volumes filled with wisdom, to the metaphysics of history and destiny, such as are fashionable nowadays. It rather tries to show that this prophetic wisdom is harmful, that the metaphysics of history impede the application of the piecemeal methods of science to the problems of social reform. And it further tries to show how we may become the makers of our fate when we have ceased to pose as its prophets.

Would you prefer instead the term “technological development and adoption forecasting”? Would you argue that forecasting is not scientific?

Very well-thought-out analysis. As a recovering futurologist (never cured), I found two notions that can drive one towards historicism. I worked at Gartner for 11 years, followed by a seven-year hiatus and a subsequent stint of three years. Doing five-year predictions for any period over five years leads one towards humility (or denial). Jerome Glenn observed that if you wish to become good at predicting the future, you need to do two things. First, you need to describe the landscape of facts that form your basis for the “present,” and second, you need to explain the chain of reasoning that gets you from the “present” to the “future.” When you finally arrive at the future, you can either celebrate (if you were right) or you must review those facts and that reasoning to discover what you missed that caused the prediction to not play out as you expected.

By performing this rigorous and thorough self-analysis, over time you either become better at making predictions or you conclude the universe is entirely random over long time horizons and move to another field.

In The Art of the Long View, Peter Schwartz discussed the notion of “scenario planning”: describing the possible future constellation of events by modeling opposing polar outcomes in pairs of orthogonal domains taken from the set (Social, Technological, Environmental, Economic, and Political). These definitions are not absolute, but simply heuristic categories to segregate phenomena into usable groups. I participated in a series of such processes with groups of 40 colleagues in the late 1990s. The results were very useful internally, and led us to institute a new domain for long-range predictions there.

Audits at Gartner revealed that my team of five information security researchers in the Information Security Strategies service was batting around .820. I conclude there is something to the notion of predictability of certain technological and business trends. Futurology is not science; the Hempel/Dray controversy of the 1960s showed that. But, as a saying often attributed to Mark Twain goes, “History doesn’t repeat itself, but it rhymes.”