Presentation about an “Architectural Zoo” of different applications and architectures of CNNs, presented yesterday at the Machine Learning Meetup in Porto Alegre.

Video (English subtitles are available):

by Christian S. Perone

Update 17/01: Reddit discussion thread.

Update 19/01: Hacker News thread.

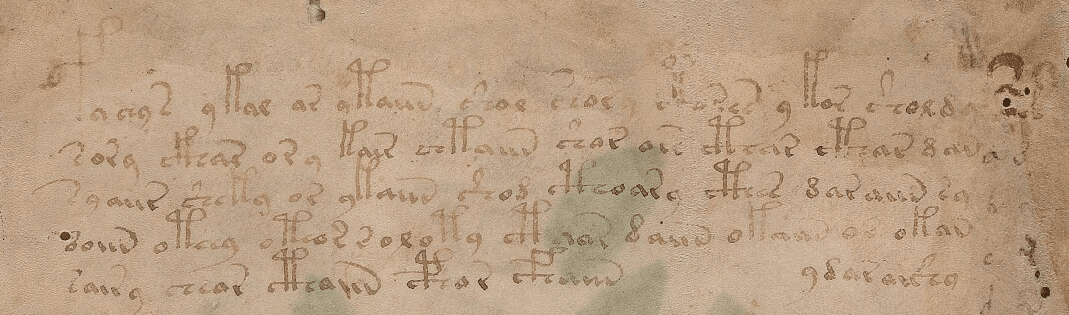

The Voynich Manuscript is a handwritten codex written in an unknown writing system and carbon-dated to the early 15th century (1404–1438). Although the manuscript has been studied by some famous cryptographers of World War I and II, nobody has deciphered it yet. The manuscript is known to be written in two different languages (Language A and Language B), and it is also known to have been written by a group of people. The manuscript has always been the subject of many different hypotheses, including the one I like the most: the “culture extinction” hypothesis, supported in 2014 by Stephen Bax. This hypothesis states that the codex isn’t enciphered at all; it was simply written in an unknown language that disappeared when its culture became extinct. In 2014, Stephen Bax proposed a provisional, partial decoding of the manuscript. The video of his presentation is very interesting and I really recommend watching it if you like this codex. There is also a transcription of the manuscript, available thanks to the hard work of many people who have been working on it for many moons.

When I heard about the work of Stephen Bax, my idea was to try to capture the patterns of the text using word2vec. Word embeddings are created using a shallow neural network architecture. It is an unsupervised technique that uses supervised learning tasks to learn the linguistic context of words. Here is a visualization of this architecture from the TensorFlow site:

Once trained, these word vectors carry a lot of semantic meaning. For instance:

We can see that these vectors can be used in vector operations to extract information about the regularities of the captured linguistic semantics. These vectors also place words with similar meanings close together, allowing similarity queries like the ones below:

```python
>>> model.most_similar("man")
[(u'woman', 0.6056041121482849), (u'guy', 0.4935004413127899), (u'boy', 0.48933547735214233), (u'men', 0.4632953703403473), (u'person', 0.45742249488830566), (u'lady', 0.4487500488758087), (u'himself', 0.4288588762283325), (u'girl', 0.4166809320449829), (u'his', 0.3853422999382019), (u'he', 0.38293731212615967)]

>>> model.most_similar("queen")
[(u'princess', 0.519856333732605), (u'latifah', 0.47644317150115967), (u'prince', 0.45914226770401), (u'king', 0.4466976821422577), (u'elizabeth', 0.4134873151779175), (u'antoinette', 0.41033703088760376), (u'marie', 0.4061327874660492), (u'stepmother', 0.4040161967277527), (u'belle', 0.38827288150787354), (u'lovely', 0.38668593764305115)]
```
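A query like `most_similar` ranks the vocabulary by cosine similarity to a query vector, and the "vector operations" mentioned above simply build that query vector with arithmetic. The NumPy sketch below shows both ideas. It uses a hypothetical toy vocabulary with hand-made 3-dimensional vectors, not a trained model, and the helper names are illustrative:

```python
import numpy as np

# hypothetical toy vocabulary with hand-made 3-d vectors (not a trained model)
VOCAB = ["man", "woman", "king", "queen", "apple"]
VECTORS = np.array([
    [1.0, 0.1, 0.0],   # man
    [0.9, 0.3, 0.0],   # woman
    [0.8, 0.0, 0.6],   # king
    [0.7, 0.3, 0.6],   # queen
    [0.0, 0.0, -1.0],  # apple
])


def vec(word):
    """Look up the vector of a word in the toy vocabulary."""
    return VECTORS[VOCAB.index(word)]


def most_similar(query, exclude=(), topn=3):
    """Rank VOCAB by cosine similarity to the vector `query`."""
    # normalise rows so that a dot product equals the cosine similarity
    unit = VECTORS / np.linalg.norm(VECTORS, axis=1, keepdims=True)
    sims = unit @ (query / np.linalg.norm(query))
    ranked = [(VOCAB[i], float(sims[i])) for i in np.argsort(-sims)]
    return [(w, s) for w, s in ranked if w not in exclude][:topn]


# nearest neighbours of "man"
print(most_similar(vec("man"), exclude={"man"}))
# vector arithmetic: king - man + woman should land closest to queen
print(most_similar(vec("king") - vec("man") + vec("woman"),
                   exclude={"king", "man", "woman"}, topn=1))
```

The query words are excluded from the results because a word is always most similar to itself.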

Word vectors can also be used (surprise) for translation. I think this is their most important feature when trying to understand a text for which we know the translations of some of the words. In the future, I intend to use the words found by Stephen Bax to check whether it is possible to capture a transformation that could lead to finding similar structures in other languages. A nice visualization of this feature is the one below, from the paper “Exploiting Similarities among Languages for Machine Translation”:

This visualization was made using gradient descent to optimize a linear transformation between the source and destination language word vectors. As you can see, the structure in Spanish is really close to the structure in English.
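In that paper, the linear transformation is a matrix W learned so that W x_i ≈ z_i for a dictionary of known translation pairs (x_i, z_i). It is found by minimising Σ_i ||W x_i − z_i||². A new word is then translated by looking for the target-language vector closest to W x.

The NumPy sketch below performs that optimisation with plain gradient descent. The data is synthetic: a hidden random rotation plus noise stands in for a real language pair. The learning rate, the number of steps and the helper name are illustrative assumptions:

```python
import numpy as np


def fit_translation_matrix(X, Z, lr=0.1, steps=500):
    """Learn W minimising the mean of ||W x_i - z_i||^2 by gradient descent.

    X: (n, d_src) source-language vectors; Z: (n, d_dst) vectors of their
    translations. Row i of Z must be the translation of row i of X.
    """
    n = X.shape[0]
    W = np.zeros((Z.shape[1], X.shape[1]))
    for _ in range(steps):
        residual = X @ W.T - Z             # (n, d_dst) prediction errors
        grad = 2.0 * residual.T @ X / n    # gradient of the mean squared error
        W -= lr * grad
    return W


rng = np.random.default_rng(0)
true_W, _ = np.linalg.qr(rng.normal(size=(5, 5)))   # hidden "language map"
X = rng.normal(size=(200, 5))                        # source vectors
Z = X @ true_W.T + 0.01 * rng.normal(size=(200, 5))  # noisy translations
W = fit_translation_matrix(X, Z)
print(np.abs(W - true_W).max())  # small: the hidden map is recovered
```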

To train this model, I had to parse and extract the transcription from the EVA (European Voynich Alphabet) to be able to feed the Voynich sentences into the word2vec model. This EVA transcription has the following format:

```
<f1r.P1.1;H> fachys.ykal.ar.ataiin.shol.shory.cth!res.y.kor.sholdy!-
<f1r.P1.1;C> fachys.ykal.ar.ataiin.shol.shory.cthorys.y.kor.sholdy!-
<f1r.P1.1;F> fya!ys.ykal.ar.ytaiin.shol.shory.*k*!res.y!kor.sholdy!-
<f1r.P1.1;N> fachys.ykal.ar.ataiin.shol.shory.cth!res.y,kor.sholdy!-
<f1r.P1.1;U> fya!ys.ykal.ar.ytaiin.shol.shory.***!r*s.y.kor.sholdo*-
#
<f1r.P1.2;H> sory.ckhar.o!r.y.kair.chtaiin.shar.are.cthar.cthar.dan!-
<f1r.P1.2;C> sory.ckhar.o.r.y.kain.shtaiin.shar.ar*.cthar.cthar.dan!-
<f1r.P1.2;F> sory.ckhar.o!r!y.kair.chtaiin.shor.ar!.cthar.cthar.dana-
<f1r.P1.2;N> sory.ckhar.o!r,y.kair.chtaiin.shar.are.cthar.cthar,dan!-
<f1r.P1.2;U> sory.ckhar.o!r!y.kair.chtaiin.shor.ary.cthar.cthar.dan*-
```

The first field, between “<” and “>”, identifies the folio (page), the line and the author of the transcription. The transcription block above covers the first two lines of the first folio of the manuscript, shown below:

As you can see, the EVA transcription contains some special characters, such as “!” and “*”. Each of them has a meaning, for instance that the transcriber was not sure about the character at that position. EVA also contains transcriptions by different authors of the same line of the folio.
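The header in front of each transcribed line can be split into its fields with a regular expression. The sketch below does this. Calling the middle field (`P1`) a "unit" is my assumption, since the text above only names the folio, the line and the author. The function name is hypothetical:

```python
import re

# <folio.unit.line;transcriber> text, e.g. "<f1r.P1.2;H> sory.ckhar..."
EVA_LINE = re.compile(
    r"^<(?P<folio>[^.>]+)\.(?P<unit>[^.>]+)\.(?P<line>\d+);"
    r"(?P<transcriber>[^>]+)>\s*(?P<text>.*)$"
)


def parse_eva_line(line):
    """Split one EVA line into folio, unit, line number, transcriber and text."""
    match = EVA_LINE.match(line.strip())
    if match is None:
        return None  # comment lines such as "#", blank lines, etc.
    fields = match.groupdict()
    fields["line"] = int(fields["line"])
    return fields


print(parse_eva_line("<f1r.P1.2;H> sory.ckhar.o!r.y.kair"))
```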

To convert this transcription into sentences, I used only lines where the transcriber was sure about the entire line. For each line of the folio, I took the first transcription satisfying this condition. I also did some cleaning on the transcription to remove the names of the drawings from the text, like: “text.text.text-{plant}text” -> “text text texttext”.
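The selection and cleaning rules described above can be sketched in a few lines of Python. Two things here are assumptions. First, only “!” and “*” are treated as "not sure" markers. Second, the `-{...}` drawing annotations and the trailing `-` are dropped exactly as in the example above. The function names are hypothetical, and this is not the author's original script:

```python
import re

UNCERTAIN = set("!*")  # assumption: characters taken to mean "transcriber not sure"


def clean_eva_text(text):
    """'text.text.text-{plant}text-' -> ['text', 'text', 'texttext']"""
    text = re.sub(r"-?\{[^}]*\}", "", text)  # drop drawing names such as "-{plant}"
    text = text.rstrip("-")                  # drop the end-of-line marker
    return [word for word in text.split(".") if word]


def eva_to_sentences(lines):
    """For each locus, keep the first transcription with no uncertain characters."""
    chosen = {}  # locus -> list of words, in order of first appearance
    for line in lines:
        line = line.strip()
        if not line.startswith("<") or ">" not in line:
            continue  # comments and "#" separators
        header, text = line[1:].split(">", 1)
        locus = header.split(";")[0]  # e.g. "f1r.P1.1"
        text = text.strip()
        if locus in chosen or any(ch in UNCERTAIN for ch in text):
            continue
        chosen[locus] = clean_eva_text(text)
    return list(chosen.values())


sample = ["<f1r.P1.1;H> fachys.ykal.ar!-",
          "<f1r.P1.1;C> fachys.ykal.ar-",
          "#",
          "<f2r.P1.1;H> text.text.text-{plant}text-"]
print(eva_to_sentences(sample))
```

The resulting lists of words are the "sentences" a word2vec implementation expects as training input.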

After this conversion from the EVA transcript to sentences compatible with the word2vec model, I trained the model to provide 100-dimensional word vectors for the words of the manuscript.
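In word2vec's skip-gram variant, the "supervised learning task" mentioned earlier is to predict the words that surround each word. The sketch below shows how one tokenized sentence is turned into (center, context) training pairs, assuming a symmetric window. `skipgram_pairs` and `window` are illustrative names, not the API of any word2vec library:

```python
def skipgram_pairs(sentence, window=2):
    """Return the (center, context) pairs a skip-gram model is trained on.

    Every word is paired with each neighbour at most `window` positions away;
    the network then learns to predict the context word from the center word.
    """
    pairs = []
    for i, center in enumerate(sentence):
        lo = max(0, i - window)
        hi = min(len(sentence), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((center, sentence[j]))
    return pairs


print(skipgram_pairs(["fachys", "ykal", "ar", "ataiin"], window=1))
# [('fachys', 'ykal'), ('ykal', 'fachys'), ('ykal', 'ar'),
#  ('ar', 'ykal'), ('ar', 'ataiin'), ('ataiin', 'ar')]
```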

After training the word vectors, I created a visualization of the 100-dimensional vectors in a 2D embedding space using the t-SNE algorithm:

As you can see, there are a lot of small clusters and, visually, two big clusters, probably accounting for the two different languages used in the Codex (I still need to confirm this two-languages aspect). After clustering with DBSCAN (using the original word vectors, not the t-SNE transformed vectors), we can clearly see the two major clusters:

Now comes the really interesting and useful part of word vectors. Let's query with a star name from the folio below (it’s pretty obvious why this is probably a star name):

```python
>>> w2v_model.most_similar("octhey")
[('qoekaiin', 0.6402825713157654),
 ('otcheody', 0.6389687061309814),
 ('ytchos', 0.566596269607544),
 ('ocphy', 0.5415685176849365),
 ('dolchedy', 0.5343093872070312),
 ('aiicthy', 0.5323750376701355),
 ('odchecthy', 0.5235849022865295),
 ('okeeos', 0.5187858939170837),
 ('cphocthy', 0.5159749388694763),
 ('oteor', 0.5050544738769531)]
```

I get really interesting similar words, for instance “ocphy” and other nearby star names:

It also returns the word “qoekaiin” from folio 48, which precedes the same star name:

As you can see, word vectors are really useful for finding some linguistic structures. We can also create another plot showing how close the star names are in the 2D embedding space created using t-SNE:

As you can see, when we zoom into the major cluster of stars, they are really all grouped together in the vector space. These representations could be used, for instance, to infer plant names from the herbal section, etc.
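The two steps behind the plots above are a t-SNE projection for the 2D view and DBSCAN clustering on the original vectors. The scikit-learn sketch below shows both. The post does not state the parameters used, so the perplexity, the cosine metric, `eps` and `min_samples` are assumptions. The vectors are synthetic stand-ins for the 100-dimensional Voynich word vectors, and the helper names are hypothetical:

```python
import numpy as np
from sklearn.cluster import DBSCAN
from sklearn.manifold import TSNE


def embed_2d(word_vectors, perplexity=30.0, seed=0):
    """t-SNE projection of (n_words, dim) vectors to (n_words, 2), for plotting only."""
    tsne = TSNE(n_components=2, perplexity=perplexity, init="pca", random_state=seed)
    return tsne.fit_transform(word_vectors)


def cluster_vectors(word_vectors, eps=0.3, min_samples=5):
    """DBSCAN on the original vectors (not the 2D t-SNE output); -1 marks noise."""
    db = DBSCAN(eps=eps, min_samples=min_samples, metric="cosine")
    return db.fit_predict(word_vectors)


# synthetic stand-in: two groups of 100-d vectors around two random directions
rng = np.random.default_rng(0)
centers = rng.normal(size=(2, 100))
vectors = np.vstack([c + 0.05 * rng.normal(size=(50, 100)) for c in centers])

coords = embed_2d(vectors, perplexity=10.0)  # (100, 2) points to scatter-plot
labels = cluster_vectors(vectors)            # cluster id of each word
print(coords.shape, sorted(set(labels.tolist())))
```

Clustering the original vectors rather than the t-SNE output matters because t-SNE distorts global distances. The 2D map is only used to look at the result.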

My idea was to show how useful word vectors are for analyzing the texts of unknown codices. I hope you liked it, and I hope this will be useful to other people who are also interested in this amazing manuscript.

– Christian S. Perone

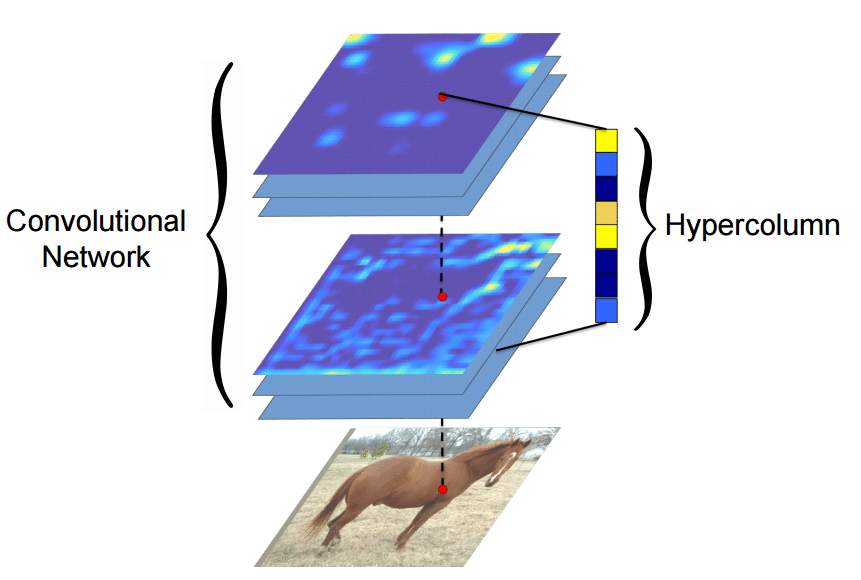

If you follow Machine Learning news, you have certainly seen the work done by Ryan Dahl on Automatic Colorization (Hacker News comments, Reddit comments). This amazing work uses pixel hypercolumn information extracted from the VGG-16 network in order to colorize images. Samim also used the network to process Black & White video frames and produced the amazing video below:

https://www.youtube.com/watch?v=_MJU8VK2PI4

Colorizing Black&White Movies with Neural Networks (video by Samim, network by Ryan)

But how do these hypercolumns work? How do we extract them for such a wide variety of pixel classification problems? The main idea of this post is to use the pre-trained VGG-16 network together with Keras and Scikit-Learn to extract the pixel hypercolumns and take a superficial look at the information in them. I’m writing this because I haven’t found anything in Python that does this, and it may be really useful for others working on pixel classification, segmentation, etc.

Many algorithms that use features from CNNs (Convolutional Neural Networks) take the features of the last FC (fully-connected) layer to extract information about a given input. However, the information in the last FC layer may be too coarse spatially to allow precise localization (due to sequences of max pooling, etc.). On the other hand, the first layers may be spatially precise but lack semantic information. To get the best of both worlds, the authors of the hypercolumn paper define the hypercolumn of a pixel as the vector of activations of all CNN units “above” that pixel.

The first step in extracting the hypercolumns is to feed the image into the CNN and extract the feature map activations for each location of the image. The tricky part comes when the feature maps are smaller than the input image, for instance after a pooling operation. In that case, the authors of the paper upsample the feature map bilinearly so it has the same size as the input. There is also the issue of the FC (fully-connected) layers: you can’t isolate units semantically tied to only one pixel of the image. The FC activations are therefore treated as 1×1 feature maps, which means that all locations share the same values for the FC part of the hypercolumn. All these activations are then concatenated to create the hypercolumn. For instance, if we take the VGG-16 architecture and use only the two convolutional layers that come right before the first two max pooling operations, we will have a hypercolumn with the size of:

64 filters (conv layer before the first pooling) + 128 filters (conv layer before the second pooling) = 192 features

This means that each pixel of the image will have a 192-dimensional hypercolumn vector. Hypercolumns are really interesting because they can combine information from the first layers (a lot of spatial information but little semantics) with information from the final layers (little spatial information and lots of semantics). Thus hypercolumns can help in many pixel classification tasks, such as the automatic colorization mentioned earlier, because each location’s hypercolumn carries information about what that pixel represents both semantically and spatially. This is also very helpful in segmentation tasks (you can see more about that in the original paper introducing the hypercolumn concept).
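The construction described above can be sketched with NumPy and SciPy:

1. Upsample the smaller maps bilinearly.
2. Copy the FC activations to every location as 1×1 maps.
3. Concatenate everything along the feature axis.

This is only an illustration under assumptions. `scipy.ndimage.zoom` with `order=1` stands in for bilinear upsampling, and its pixel-alignment convention may differ from the paper's. The helper name is hypothetical, and random arrays replace real VGG-16 activations:

```python
import numpy as np
from scipy.ndimage import zoom


def build_hypercolumns(conv_maps, fc_vectors=(), size=(224, 224)):
    """Concatenate per-location features from several layers.

    conv_maps:  list of (channels, h, w) activations; each one is upsampled to
                `size` with linear interpolation (order=1) on the spatial axes.
    fc_vectors: list of 1-D activations treated as 1x1 maps, so every location
                receives the same values.
    Returns an array of shape (total_features, size[0], size[1]).
    """
    parts = []
    for fmap in conv_maps:
        c, h, w = fmap.shape
        parts.append(zoom(fmap, (1, size[0] / h, size[1] / w), order=1))
    for vec in fc_vectors:
        vec = np.asarray(vec, dtype=float)
        parts.append(np.broadcast_to(vec[:, None, None], (vec.size,) + tuple(size)))
    return np.concatenate(parts, axis=0)


rng = np.random.default_rng(0)
hc = build_hypercolumns([rng.random((64, 224, 224)),    # e.g. a 224x224 conv output
                         rng.random((128, 112, 112))],  # e.g. a 112x112 conv output
                        fc_vectors=[rng.random(10)])
print(hc.shape)  # (202, 224, 224)
```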

Everything sounds cool, but how do we extract hypercolumns in practice?

Before we can extract the hypercolumns, we’ll set up the pre-trained VGG-16 network. Why pre-trained? Because, you know, a good GPU (I can’t even imagine several of them) is very expensive here in Brazil, and I don’t want to sell my kidney to buy one.

To set up a pre-trained VGG-16 network in Keras, you’ll need to download the weights file from here (the vgg16_weights.h5 file, approximately 500MB). Then set up the architecture and load the downloaded weights using Keras (more information about the weights file and architecture here):

```python
from matplotlib import pyplot as plt

import theano
import cv2
import numpy as np
import scipy as sp

from keras.models import Sequential
from keras.layers.core import Flatten, Dense, Dropout
from keras.layers.convolutional import Convolution2D, MaxPooling2D
from keras.layers.convolutional import ZeroPadding2D
from keras.optimizers import SGD

from sklearn.manifold import TSNE
from sklearn import manifold
from sklearn import cluster
from sklearn.preprocessing import StandardScaler


def VGG_16(weights_path=None):
    # Keras (Theano backend, channels-first) definition of VGG-16;
    # the comments give the index of each layer in model.layers
    model = Sequential()

    # block 1: layers 0-4
    model.add(ZeroPadding2D((1, 1), input_shape=(3, 224, 224)))
    model.add(Convolution2D(64, 3, 3, activation='relu'))
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(64, 3, 3, activation='relu'))
    model.add(MaxPooling2D((2, 2), strides=(2, 2)))

    # block 2: layers 5-9
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(128, 3, 3, activation='relu'))
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(128, 3, 3, activation='relu'))
    model.add(MaxPooling2D((2, 2), strides=(2, 2)))

    # block 3: layers 10-16
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(256, 3, 3, activation='relu'))
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(256, 3, 3, activation='relu'))
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(256, 3, 3, activation='relu'))
    model.add(MaxPooling2D((2, 2), strides=(2, 2)))

    # block 4: layers 17-23
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(512, 3, 3, activation='relu'))
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(512, 3, 3, activation='relu'))
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(512, 3, 3, activation='relu'))
    model.add(MaxPooling2D((2, 2), strides=(2, 2)))

    # block 5: layers 24-30
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(512, 3, 3, activation='relu'))
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(512, 3, 3, activation='relu'))
    model.add(ZeroPadding2D((1, 1)))
    model.add(Convolution2D(512, 3, 3, activation='relu'))
    model.add(MaxPooling2D((2, 2), strides=(2, 2)))

    # classifier: layers 31-36
    model.add(Flatten())
    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(1000, activation='softmax'))

    if weights_path:
        model.load_weights(weights_path)

    return model
```

As you can see, this is very simple code that declares the VGG-16 architecture and loads the pre-trained weights (together with the Python imports for the required packages). After that, we’ll compile the Keras model:

```python
model = VGG_16('vgg16_weights.h5')
sgd = SGD(lr=0.1, decay=1e-6, momentum=0.9, nesterov=True)
model.compile(optimizer=sgd, loss='categorical_crossentropy')
```

Now let’s test the network using an image:

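```python
# cv2 loads images as BGR uint8 arrays with shape (height, width, channels)
im_original = cv2.resize(cv2.imread('madruga.jpg'), (224, 224))

# the VGG-16 weights expect float input with the per-channel
# ImageNet mean (in BGR order) subtracted
im = im_original.astype(np.float32)
im[:, :, 0] -= 103.939
im[:, :, 1] -= 116.779
im[:, :, 2] -= 123.68

# channels-first (3, 224, 224), then add the batch axis -> (1, 3, 224, 224)
im = im.transpose((2, 0, 1))
im = np.expand_dims(im, axis=0)

# convert BGR -> RGB only for display with matplotlib
im_converted = cv2.cvtColor(im_original, cv2.COLOR_BGR2RGB)
plt.imshow(im_converted)
```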

Image used

As we can see, we loaded the image and reordered its axes (channels first, plus a batch axis). Now we can feed it into VGG-16 to get the predictions:

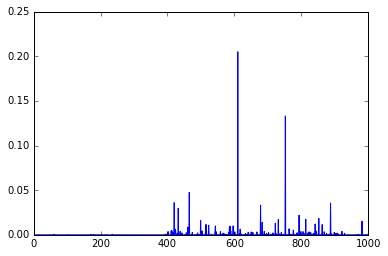

```python
out = model.predict(im)
plt.plot(out.ravel())
```

As you can see, these are the final activations of the softmax layer. The class with the highest activation is the “jersey, T-shirt, tee shirt” category.
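To read the top predictions off such a softmax vector instead of eyeballing the plot, sort the activations. The sketch below is a hypothetical helper. `class_names` stands for a list of the 1000 ImageNet category descriptions, which you would have to supply yourself:

```python
import numpy as np


def top_k(probabilities, k=5, class_names=None):
    """Return the k most probable classes as (index or name, probability) pairs."""
    p = np.asarray(probabilities).ravel()  # (1, 1000) softmax output -> (1000,)
    best = np.argsort(p)[::-1][:k]         # indices by decreasing probability
    return [(class_names[i] if class_names is not None else int(i), float(p[i]))
            for i in best]


print(top_k([[0.1, 0.6, 0.3]], k=2))  # [(1, 0.6), (2, 0.3)]
```

With the network above, this would be called as `top_k(out, k=5)`.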

Now, to extract the feature map activations, we need to be able to extract feature maps from arbitrary convolutional layers of the network. We can do that by compiling a Theano function with the get_output() method of Keras, as in the example below:

```python
get_feature = theano.function([model.layers[0].input],
                              model.layers[3].get_output(train=False),
                              allow_input_downcast=False)
feat = get_feature(im)
plt.imshow(feat[0][2])
```

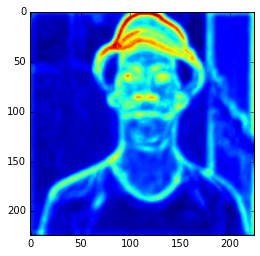

Feature Map

In the example above, I’m compiling a Theano function to get the feature maps of layer 3 (a convolutional layer) and then showing only its 3rd feature map (index 2). Here we can see the intensity of the activations. Feature maps from later layers capture more abstract features, like eyes. Look at the example below from layer 15 (also a convolutional layer):

```python
get_feature = theano.function([model.layers[0].input],
                              model.layers[15].get_output(train=False),
                              allow_input_downcast=False)
feat = get_feature(im)
plt.imshow(feat[0][13])
```

More semantic feature maps.

As you can see, this second feature map is extracting more abstract features. You can also note that the image seems more stretched than the feature map we saw earlier. That is because the first feature maps are 224×224, while this one is 56×56 due to the two max pooling operations before this convolutional layer. That is why we lose a lot of spatial information.
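These sizes follow from the layer list in `VGG_16()`. Each `ZeroPadding2D((1, 1))` plus 3×3 convolution pair keeps the spatial size, and each 2×2, stride-2 max pooling halves it. The small pure-Python sketch below is a hypothetical helper that mirrors the layer order of that function and computes the output size at every layer index:

```python
def vgg16_spatial_sizes(input_size=224):
    """Spatial output size at each layer index of the VGG_16() Sequential model.

    Padding adds 2, a 3x3 'valid' convolution removes 2, pooling halves the size.
    The six Flatten/Dense/Dropout layers at the end are reported as None.
    """
    convs_per_block = [2, 2, 3, 3, 3]
    kinds = []
    for n_convs in convs_per_block:
        kinds += ["pad", "conv"] * n_convs + ["pool"]
    sizes, size = [], input_size
    for kind in kinds:
        if kind == "pad":
            size += 2
        elif kind == "conv":
            size -= 2
        else:
            size //= 2
        sizes.append(size)
    return sizes + [None] * 6


sizes = vgg16_spatial_sizes()
print([sizes[i] for i in (3, 8, 15, 22, 29)])  # [224, 112, 56, 28, 14]
```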

Finally, let’s extract the hypercolumns of an arbitrary set of layers. To do that, we will define a function:

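```python
def extract_hypercolumn(model, layer_indexes, instance):
    # symbolic outputs of the requested layers (test mode, no dropout)
    layers = [model.layers[li].get_output(train=False) for li in layer_indexes]
    get_feature = theano.function([model.layers[0].input], layers,
                                  allow_input_downcast=False)
    feature_maps = get_feature(instance)

    hypercolumns = []
    for convmap in feature_maps:
        # convmap has shape (1, n_filters, h, w): upsample each filter's map
        for fmap in convmap[0]:
            # bilinear upsampling to the input size (scipy.misc.imresize was
            # removed in SciPy 1.3, so cv2.resize is used instead)
            upscaled = cv2.resize(fmap, (224, 224),
                                  interpolation=cv2.INTER_LINEAR)
            hypercolumns.append(upscaled)

    # shape: (total number of filters, 224, 224)
    return np.asarray(hypercolumns)
```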

As we can see, this function expects three parameters:

- the model itself;
- a list of the indexes of the layers whose features make up the hypercolumn;
- an image instance from which to extract the hypercolumns.

Let’s now test the hypercolumn extraction for layers 3 and 8, the convolutional layers right before the first two poolings:

```python
layers_extract = [3, 8]
hc = extract_hypercolumn(model, layers_extract, im)
```

That’s it, we extracted the hypercolumn vector of each pixel. The shape of this “hc” variable is (192L, 224L, 224L). This means that we have a 192-dimensional hypercolumn for each of the 224×224 pixels (a total of 50176 pixels with 192 hypercolumn features each).

Let’s plot the average of the hypercolumns activations for each pixel:

```python
ave = np.average(hc.transpose(1, 2, 0), axis=2)
plt.imshow(ave)
```

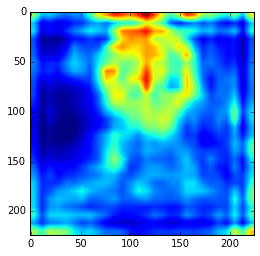

As you can see, those first hypercolumn activations all look like edge detectors. Let’s see what the hypercolumns look like for layers 22 and 29:

```python
layers_extract = [22, 29]
hc = extract_hypercolumn(model, layers_extract, im)
ave = np.average(hc.transpose(1, 2, 0), axis=2)
plt.imshow(ave)
```

As we can see now, the features are much more abstract and semantically interesting, but their spatial information is a little fuzzy.

Remember that you can extract the hypercolumns using all the initial layers and also the final layers, including the FC layers. Here I’m extracting them separately to show how they differ in the visualization plots.

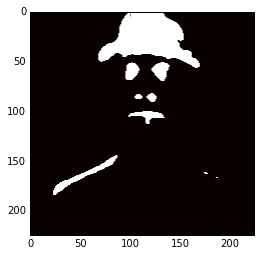

Now you can do a lot of things: you can use these hypercolumns to classify pixels for some task, to do automatic pixel colorization, segmentation, etc. Just as an experiment, I’m going to take the hypercolumns from VGG-16 layers 3, 8, 15, 22 and 29 and cluster them using KMeans with 2 clusters:

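```python
# hypercolumns from layers 3, 8, 15, 22 and 29 (64+128+256+512+512 = 1472 features)
hc = extract_hypercolumn(model, [3, 8, 15, 22, 29], im)

# one row per pixel: (224*224, 1472)
m = hc.transpose(1, 2, 0).reshape(50176, -1)

# n_jobs and precompute_distances no longer exist in recent scikit-learn KMeans
kmeans = cluster.KMeans(n_clusters=2, max_iter=300)
cluster_labels = kmeans.fit_predict(m)

# back to the image layout to show the cluster of each pixel
imcluster = cluster_labels.reshape(224, 224)
plt.imshow(imcluster, cmap="hot")
```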

Now you can imagine how useful hypercolumns can be to tasks like keypoints extraction, segmentation, etc. It’s a very elegant, simple and useful concept.

I hope you liked it !

– Christian S. Perone

Just published this deck of slides of a presentation about Deep Learning and Convolutional Neural Networks.

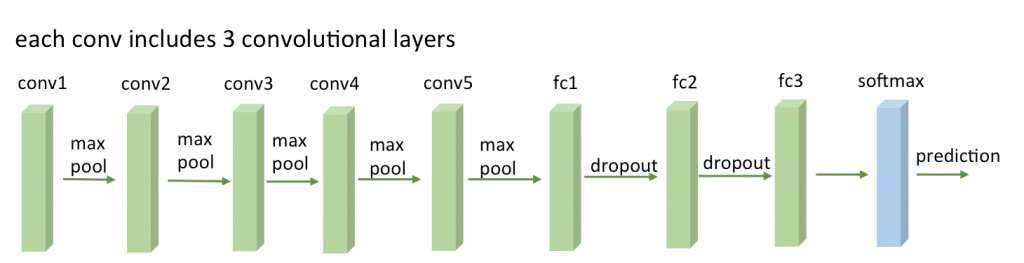

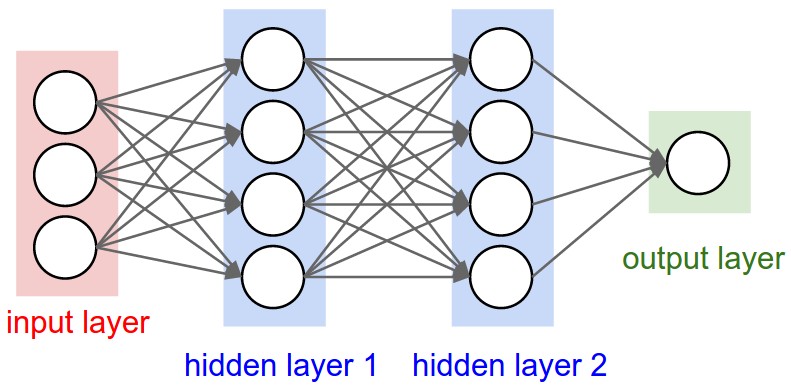

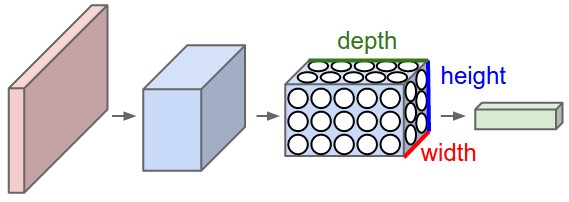

Convolutional neural networks (or ConvNets) are biologically-inspired variants of MLPs. They have different kinds of layers, and each kind works differently from the usual MLP layers. If you are interested in learning more about ConvNets, a good course is CS231n – Convolutional Neural Networks for Visual Recognition. The architecture of CNNs is shown in the images below:

As you can see, ConvNets work with 3D volumes and transformations of these 3D volumes. I won’t repeat the entire CS231n tutorial in this post, so if you’re really interested, please take the time to read it before continuing.
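To make the "3D volume" idea concrete, here is a deliberately naive NumPy sketch of one convolutional layer followed by 2×2 max pooling. The layer slides K filters over a C×H×W volume. The function names are hypothetical, the filters are random, and the loops favour clarity over speed. The shapes match the first layers of the MNIST network built later in this post:

```python
import numpy as np


def conv2d_valid(volume, filters, bias):
    """Slide K filters over a (C, H, W) volume -> (K, H-fh+1, W-fw+1) volume.

    filters: (K, C, fh, fw), bias: (K,). Stride 1, no padding. This computes a
    cross-correlation, which most deep-learning libraries call convolution.
    """
    C, H, W = volume.shape
    K, Cf, fh, fw = filters.shape
    assert C == Cf, "each filter spans the full depth of the input volume"
    out = np.empty((K, H - fh + 1, W - fw + 1))
    for k in range(K):
        for i in range(H - fh + 1):
            for j in range(W - fw + 1):
                out[k, i, j] = np.sum(volume[:, i:i + fh, j:j + fw] * filters[k]) + bias[k]
    return out


def max_pool_2x2(volume):
    """2x2 max pooling with stride 2, applied to each depth slice independently."""
    C, H, W = volume.shape
    v = volume[:, :H - H % 2, :W - W % 2]
    return v.reshape(C, H // 2, 2, W // 2, 2).max(axis=(2, 4))


rng = np.random.default_rng(0)
digit = rng.random((1, 28, 28))  # a 1x28x28 input volume
h = np.maximum(conv2d_valid(digit, rng.normal(size=(32, 1, 5, 5)), np.zeros(32)), 0)  # ReLU
print(h.shape, max_pool_2x2(h).shape)  # (32, 24, 24) (32, 12, 12)
```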

Two of the Python packages for deep learning that I really like to work with are Lasagne and nolearn. Lasagne is based on Theano, so the GPU speedups make a big difference, and its declarative approach to building neural networks is really helpful. The nolearn library is a collection of utilities around neural network packages (including Lasagne). It can help us a lot when creating the network architecture, inspecting the layers, etc.

In this post I’m going to show how to build a simple ConvNet architecture with some convolutional and pooling layers. I’m also going to show how to use a ConvNet to train a feature extractor, and then use it to extract features before feeding them into different models like SVM, Logistic Regression, etc. Many people take ConvNet models pre-trained on ImageNet and remove the last output layer to extract features. This is usually called transfer learning, because you can use layers from other ConvNets as feature extractors for different problems. Since the first-layer filters of ConvNets work as edge detectors, they can be used as general feature detectors for other problems.

The MNIST dataset is one of the most traditional datasets for digit classification. We will use a pickled version of it for Python, but first, let’s import the packages that we will need:

```python
# Python 2 code: on Python 3, urlretrieve lives in urllib.request and cPickle is pickle
import matplotlib
import matplotlib.pyplot as plt
import matplotlib.cm as cm
from urllib import urlretrieve
import cPickle as pickle
import os
import gzip
import numpy as np
import theano
import lasagne
from lasagne import layers
from lasagne.updates import nesterov_momentum
from nolearn.lasagne import NeuralNet
from nolearn.lasagne import visualize
from sklearn.metrics import classification_report
from sklearn.metrics import confusion_matrix
```

As you can see, we are importing matplotlib for plotting some images, some native Python modules to download the MNIST dataset, numpy, theano, lasagne, nolearn and some scikit-learn functions for model evaluation.

After that, we define our MNIST loading function (this is pretty much the same function used in the Lasagne tutorial):

```python
def load_dataset():
    url = 'http://deeplearning.net/data/mnist/mnist.pkl.gz'
    filename = 'mnist.pkl.gz'
    if not os.path.exists(filename):
        print("Downloading MNIST dataset...")
        urlretrieve(url, filename)

    with gzip.open(filename, 'rb') as f:
        data = pickle.load(f)

    X_train, y_train = data[0]
    X_val, y_val = data[1]
    X_test, y_test = data[2]

    X_train = X_train.reshape((-1, 1, 28, 28))
    X_val = X_val.reshape((-1, 1, 28, 28))
    X_test = X_test.reshape((-1, 1, 28, 28))

    y_train = y_train.astype(np.uint8)
    y_val = y_val.astype(np.uint8)
    y_test = y_test.astype(np.uint8)

    return X_train, y_train, X_val, y_val, X_test, y_test
```

As you can see, we download the pickled MNIST dataset and unpack it into three datasets: train, validation and test. After that, we reshape the images so they can be fed to the Lasagne input layer later. We also convert the label arrays to uint8 due to GPU/Theano datatype restrictions.

After that, we’re ready to load the MNIST dataset and inspect it:

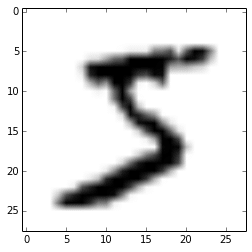

```python
X_train, y_train, X_val, y_val, X_test, y_test = load_dataset()
plt.imshow(X_train[0][0], cmap=cm.binary)
```

The code above will output the following image (I’m using IPython Notebook):

Now we can define our ConvNet architecture and then train it using a GPU/CPU (I have a very cheap GPU, but it helps a lot):

```python
net1 = NeuralNet(
    layers=[('input', layers.InputLayer),
            ('conv2d1', layers.Conv2DLayer),
            ('maxpool1', layers.MaxPool2DLayer),
            ('conv2d2', layers.Conv2DLayer),
            ('maxpool2', layers.MaxPool2DLayer),
            ('dropout1', layers.DropoutLayer),
            ('dense', layers.DenseLayer),
            ('dropout2', layers.DropoutLayer),
            ('output', layers.DenseLayer),
            ],
    # input layer
    input_shape=(None, 1, 28, 28),
    # layer conv2d1
    conv2d1_num_filters=32,
    conv2d1_filter_size=(5, 5),
    conv2d1_nonlinearity=lasagne.nonlinearities.rectify,
    conv2d1_W=lasagne.init.GlorotUniform(),
    # layer maxpool1
    maxpool1_pool_size=(2, 2),
    # layer conv2d2
    conv2d2_num_filters=32,
    conv2d2_filter_size=(5, 5),
    conv2d2_nonlinearity=lasagne.nonlinearities.rectify,
    # layer maxpool2
    maxpool2_pool_size=(2, 2),
    # dropout1
    dropout1_p=0.5,
    # dense
    dense_num_units=256,
    dense_nonlinearity=lasagne.nonlinearities.rectify,
    # dropout2
    dropout2_p=0.5,
    # output
    output_nonlinearity=lasagne.nonlinearities.softmax,
    output_num_units=10,
    # optimization method params
    update=nesterov_momentum,
    update_learning_rate=0.01,
    update_momentum=0.9,
    max_epochs=10,
    verbose=1,
    )

# Train the network
nn = net1.fit(X_train, y_train)
```

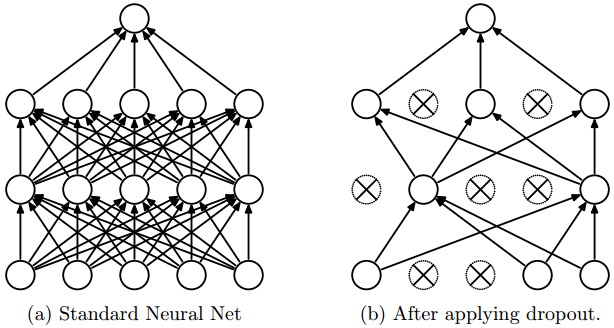

As you can see, the layers parameter is a list of (name, type) tuples, and each layer's parameters are then set using the layer name as a prefix. Our architecture uses two convolutional layers with pooling, followed by a fully connected layer (dense layer) and the output layer. There are also dropout layers between some of them. Dropout is a regularizer that randomly sets input values to zero to avoid overfitting (see the image below).

After calling the fit method, nolearn shows the status of the learning process. On my machine, with my humble GPU, I got the results below:

```
# Neural Network with 160362 learnable parameters

## Layer information

  #  name      size
---  --------  --------
  0  input     1x28x28
  1  conv2d1   32x24x24
  2  maxpool1  32x12x12
  3  conv2d2   32x8x8
  4  maxpool2  32x4x4
  5  dropout1  32x4x4
  6  dense     256
  7  dropout2  256
  8  output    10

  epoch    train loss    valid loss    train/val    valid acc  dur
-------  ------------  ------------  -----------  -----------  ------
      1       0.85204       0.16707      5.09977      0.95174  33.71s
      2       0.27571       0.10732      2.56896      0.96825  33.34s
      3       0.20262       0.08567      2.36524      0.97488  33.51s
      4       0.16551       0.07695      2.15081      0.97705  33.50s
      5       0.14173       0.06803      2.08322      0.98061  34.38s
      6       0.12519       0.06067      2.06352      0.98239  34.02s
      7       0.11077       0.05532      2.00254      0.98427  33.78s
      8       0.10497       0.05771      1.81898      0.98248  34.17s
      9       0.09881       0.05159      1.91509      0.98407  33.80s
     10       0.09264       0.04958      1.86864      0.98526  33.40s
```

As you can see, the final validation accuracy was 0.98526, a pretty good performance for only 10 epochs of training.
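The layer sizes and the 160362 learnable parameters reported in the log above can be checked by hand. The sketch below assumes Lasagne's Conv2DLayer defaults of stride 1 and no padding (a "valid" convolution), which is consistent with the 32x24x24 size reported for conv2d1. Pooling and dropout layers have no parameters. The helper names are hypothetical:

```python
def conv_out(size, filter_size, stride=1, pad=0):
    """Output width of a convolution: (W - F + 2P) / S + 1."""
    return (size - filter_size + 2 * pad) // stride + 1


def count_parameters():
    """Recompute the final spatial size and the learnable parameters of net1."""
    params = 0
    size, channels = 28, 1                    # input: 1x28x28
    for num_filters in (32, 32):              # conv2d1 + maxpool1, conv2d2 + maxpool2
        size = conv_out(size, 5)              # 5x5 convolution
        params += num_filters * channels * 5 * 5 + num_filters  # weights + biases
        channels = num_filters
        size //= 2                            # 2x2 max pooling
    flat = channels * size * size             # 32x4x4 = 512 inputs to the dense layer
    params += flat * 256 + 256                # dense layer
    params += 256 * 10 + 10                   # output layer
    return size, params


print(count_parameters())  # (4, 160362)
```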

Now we can use the model to predict the entire testing dataset:

```python
preds = net1.predict(X_test)
```

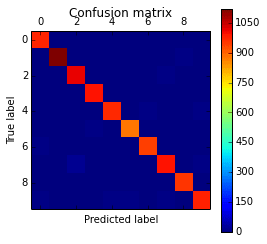

And we can also plot a confusion matrix to check the performance of the neural network classification:

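```python
# use a name other than "cm", so the matplotlib.cm module imported earlier isn't shadowed
conf_mat = confusion_matrix(y_test, preds)
plt.matshow(conf_mat)
plt.title('Confusion matrix')
plt.colorbar()
plt.ylabel('True label')
plt.xlabel('Predicted label')
plt.show()
```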

The code above will plot the following confusion matrix:

As you can see, most of the predictions fall on the diagonal, showing the good performance of our classifier.
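The diagonal can also be read numerically. scikit-learn's `confusion_matrix` puts the true labels on the rows and the predictions on the columns. Dividing the diagonal by the row sums therefore gives the per-class recall, i.e. the accuracy on each digit. The NumPy sketch below uses hypothetical helper names:

```python
import numpy as np


def per_class_accuracy(conf_mat):
    """Fraction of each true class that was predicted correctly (per-class recall).

    Assumes scikit-learn's layout: rows are true labels, columns are predictions.
    """
    conf_mat = np.asarray(conf_mat, dtype=float)
    return np.diag(conf_mat) / conf_mat.sum(axis=1)


def overall_accuracy(conf_mat):
    """Correct predictions (the diagonal) divided by all predictions."""
    conf_mat = np.asarray(conf_mat, dtype=float)
    return np.trace(conf_mat) / conf_mat.sum()


toy = [[8, 2],
       [1, 9]]
print(per_class_accuracy(toy), overall_accuracy(toy))
```

With the network above, `per_class_accuracy(confusion_matrix(y_test, preds))` would list the recall for each digit from 0 to 9.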

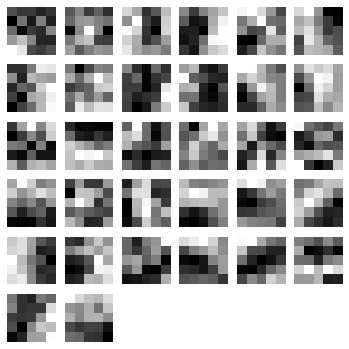

We can also visualize the 32 filters from the first convolutional layer:

```python
visualize.plot_conv_weights(net1.layers_['conv2d1'])
```

The code above will plot the following filters:

As you can see, the nolearn plot_conv_weights plots all the filters present in the layer we specified.

Now it is time to create Theano-compiled functions that feed the input data forward through the architecture, up to the layer you’re interested in. I’m going to get the functions for the output layer and for the dense layer before it:

```python
dense_layer = layers.get_output(net1.layers_['dense'], deterministic=True)
output_layer = layers.get_output(net1.layers_['output'], deterministic=True)
input_var = net1.layers_['input'].input_var

f_output = theano.function([input_var], output_layer)
f_dense = theano.function([input_var], dense_layer)
```

As you can see, we now have two Theano functions called f_output and f_dense (for the output and dense layers). Note that to get these layers we pass an extra parameter called “deterministic”. It keeps the dropout layers from affecting our feed-forward pass.
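The NumPy sketch below shows what `deterministic=True` switches off, using (inverted) dropout. As far as I know, Lasagne's DropoutLayer rescales the kept values by 1/(1-p) by default, as done here. Still, this is an illustration with a hypothetical function name, not Lasagne's code:

```python
import numpy as np


def dropout(x, p=0.5, deterministic=False, rng=None):
    """Inverted dropout on a NumPy array.

    Training (deterministic=False): each value is zeroed with probability p and
    the survivors are scaled by 1/(1-p), so the expected activation is unchanged.
    Inference (deterministic=True): the input passes through untouched.
    """
    if deterministic or p == 0:
        return x
    rng = np.random.default_rng() if rng is None else rng
    keep = rng.random(x.shape) >= p  # True with probability 1-p
    return x * keep / (1.0 - p)


activations = np.ones((2, 8))
print(dropout(activations, rng=np.random.default_rng(0)))  # random 0s and 2s
print(dropout(activations, deterministic=True))            # unchanged ones
```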

We can now convert an example instance to the input format and then feed it into the theano function for the output layer:

```python
instance = X_test[0][None, :, :]
%timeit -n 500 f_output(instance)
# 500 loops, best of 3: 858 µs per loop
```

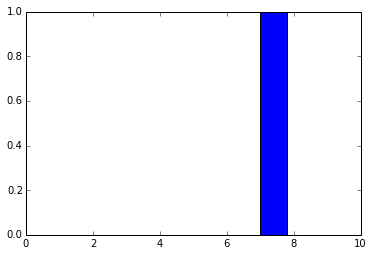

As you can see, a call to f_output takes about 858 µs (best of 3 runs of 500 loops). We can also plot the output layer activations for the instance:

```python
pred = f_output(instance)
N = pred.shape[1]
plt.bar(range(N), pred.ravel())
```

The code above will create the following plot:

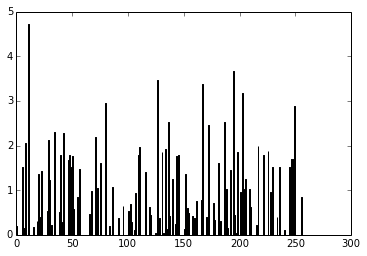

As you can see, the digit was recognized as a 7. Being able to create Theano functions for any layer of the network is very useful. You can create a function (like we did before) that returns the activations of the dense layer (the one before the output layer). These activations can then serve as features, so the network acts not as a classifier but as a feature extractor. Let’s now plot the 256 unit activations of the dense layer:

```python
pred = f_dense(instance)
N = pred.shape[1]
plt.bar(range(N), pred.ravel())
```

The code above will create the following plot:

You can now use these 256 activations as features for a linear classifier like Logistic Regression or SVM.
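The scikit-learn sketch below covers that last step. The helper name `fit_on_features` and the values of `C` and `max_iter` are assumptions. The 256-dimensional features are synthetic stand-ins for the activations returned by f_dense:

```python
import numpy as np
from sklearn.linear_model import LogisticRegression


def fit_on_features(features, labels, C=1.0):
    """Train a linear classifier on activations extracted from the network."""
    clf = LogisticRegression(C=C, max_iter=1000)
    return clf.fit(features, labels)


# With the trained network the features would be, for example:
#   train_feats = f_dense(X_train[:5000]); train_labels = y_train[:5000]
# Here, synthetic 256-d "activations" for two classes stand in for them.
rng = np.random.default_rng(0)
train_feats = np.vstack([rng.normal(0.0, 1.0, size=(100, 256)),
                         rng.normal(0.5, 1.0, size=(100, 256))])
train_labels = np.repeat([0, 1], 100)

clf = fit_on_features(train_feats, train_labels)
print(clf.score(train_feats, train_labels))  # training accuracy
```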

I hope you enjoyed the tutorial !

Update – 05 Dec 2017: Google just announced that it is committed to developing a new release of the S2 library. Amazing news! The repository can be found here.

Google’s S2 library is a real treasure, not only for its spatial indexing capabilities but also because it was released more than 4 years ago and never got the attention it deserved. The S2 library is used by Google itself in Google Maps, by the MongoDB engine and by Foursquare. Yet you won’t find any documentation or articles about it anywhere, except for a paper by Foursquare, a Google presentation and the source code comments. You’ll also struggle to find bindings for the library. The official repository is missing the SWIG files for the Python library, but thanks to some forks we can have a partial Python binding (which I’m going to use in this post). I heard that Google is actively working on the library right now, and we will probably get more details soon when they release this work. In the meantime, I decided to share some examples of the library and the reasons why I think it is so cool.

You’ll see this “cell” concept all around the S2 code. The cells are a hierarchical decomposition of the sphere (the Earth in our case, but you’re not limited to it) into compact representations of regions or points. Regions can also be approximated using these same cells, which have some nice features:

The S2 library starts by projecting the points/regions of the sphere onto a cube, and each face of the cube has a quad-tree onto which the sphere points are projected. After that, a transformation is applied (for more details on why, see the Google presentation) and the space is discretized. The cells are then enumerated along a Hilbert curve, and this is why the library is so nice: the Hilbert curve is a space-filling curve that maps multiple dimensions onto one dimension while having a special spatial property, namely that it preserves locality.
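To make the projection step more concrete, here is a minimal pure-Python sketch that maps a lat/lng point to a cube face and then to discrete (i, j) leaf coordinates. It assumes S2's default quadratic projection and face numbering as I understand them from the S2 sources: faces 0 to 2 are the +x, +y, +z axes and faces 3 to 5 are the negative ones. The function names are hypothetical, and the final step (enumerating (i, j) along the Hilbert curve of each face) is left out:

```python
import math

MAX_LEVEL = 30  # leaf cells: 2**30 x 2**30 per face


def latlng_to_xyz(lat_deg, lng_deg):
    """Unit vector on the sphere for a latitude/longitude in degrees."""
    lat, lng = math.radians(lat_deg), math.radians(lng_deg)
    return (math.cos(lat) * math.cos(lng),
            math.cos(lat) * math.sin(lng),
            math.sin(lat))


def xyz_to_face_uv(p):
    """Project the point onto the cube face it hits; u, v lie in [-1, 1]."""
    x, y, z = p
    # Assumption: face = axis with the largest absolute component, +3 if negative
    face = max(range(3), key=lambda i: abs(p[i]))
    if p[face] < 0:
        face += 3
    if face == 0:
        u, v = y / x, z / x
    elif face == 1:
        u, v = -x / y, z / y
    elif face == 2:
        u, v = -x / z, -y / z
    elif face == 3:
        u, v = z / x, y / x
    elif face == 4:
        u, v = z / y, -x / y
    else:
        u, v = -y / z, -x / z
    return face, u, v


def uv_to_st(u):
    """Quadratic transform into [0, 1] that evens out the cell areas."""
    if u >= 0:
        return 0.5 * math.sqrt(1 + 3 * u)
    return 1 - 0.5 * math.sqrt(1 - 3 * u)


def st_to_ij(s):
    """Discretize [0, 1] into 2**30 leaf coordinates (clamped to the valid range)."""
    limit = 1 << MAX_LEVEL
    return max(0, min(limit - 1, int(math.floor(s * limit))))


def latlng_to_face_ij(lat_deg, lng_deg):
    face, u, v = xyz_to_face_uv(latlng_to_xyz(lat_deg, lng_deg))
    return face, st_to_ij(uv_to_st(u)), st_to_ij(uv_to_st(v))
```

For the Porto Alegre point used later in this post, `latlng_to_face_ij(-30.0438, -51.14022)` lands on face 4, which matches the top 3 bits of the cell id returned by the S2 bindings.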

The Hilbert curve is a space-filling curve, which means that its range covers the entire n-dimensional space. To understand how this works, imagine a long string arranged in space in a special way, such that it passes through each square of the space, thus filling the entire space. To map a 2D point onto the Hilbert curve, you just need to select the point on the string where the point is located. An easy way to understand it is to use this interactive example, where you can click on any point of the curve and it will show where along the string the point is located, and vice versa.

In the image below, a point at the very beginning of the Hilbert curve (the string) is also located at the very beginning of the line (the curve is represented by a long string at the bottom of the image):

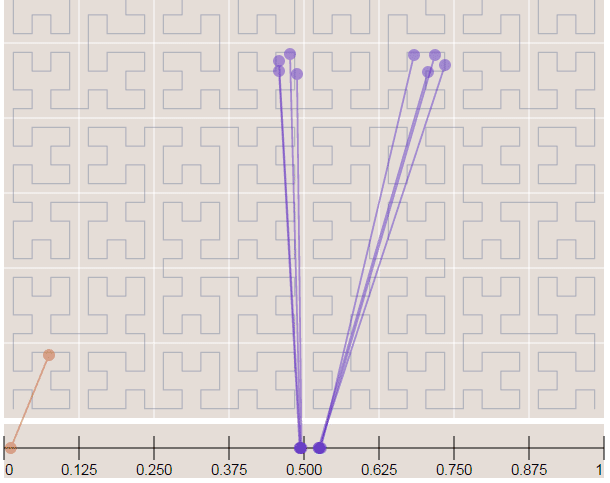

In the image below, where we have more points, it is easy to see how the Hilbert curve preserves spatial locality. Points close to each other on the curve (in the 1D representation, the line at the bottom) are also close in 2D space (the x,y plane). However, the opposite isn’t quite true: you can have 2D points that are close to each other in the x,y plane but aren’t close on the Hilbert curve.
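Both sides of this locality property can be checked with the classic iterative Hilbert curve algorithm on an n×n grid. This is the textbook formulation, not S2's own implementation (S2 uses lookup tables and a per-face orientation); `rotate`, `xy_to_d` and `d_to_xy` are illustrative names:

```python
def rotate(n, x, y, rx, ry):
    """Rotate/flip a quadrant so that the sub-curves connect end to end."""
    if ry == 0:
        if rx == 1:
            x, y = n - 1 - x, n - 1 - y
        x, y = y, x
    return x, y


def xy_to_d(n, x, y):
    """Position d along the Hilbert curve of grid point (x, y); n is a power of 2."""
    d = 0
    s = n // 2
    while s > 0:
        rx = 1 if x & s else 0
        ry = 1 if y & s else 0
        d += s * s * ((3 * rx) ^ ry)
        x, y = rotate(n, x, y, rx, ry)
        s //= 2
    return d


def d_to_xy(n, d):
    """Inverse mapping: the grid point at position d along the curve."""
    x = y = 0
    s = 1
    while s < n:
        rx = 1 & (d // 2)
        ry = 1 & (d ^ rx)
        x, y = rotate(s, x, y, rx, ry)
        x += s * rx
        y += s * ry
        d //= 4
        s *= 2
    return x, y


n = 8
path = [d_to_xy(n, d) for d in range(n * n)]
# Walking along the curve, every step moves to an adjacent grid cell...
assert all(abs(x1 - x2) + abs(y1 - y2) == 1
           for (x1, y1), (x2, y2) in zip(path, path[1:]))
# ...but adjacent grid cells can be far apart along the curve
print(xy_to_d(4, 1, 0), xy_to_d(4, 2, 0))  # 1 14
```

The grid points (1, 0) and (2, 0) are neighbors in the plane, yet they sit at positions 1 and 14 of the 16-step curve, which is exactly the "opposite isn't quite true" case described above.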

Since S2 uses the Hilbert curve to enumerate the cells, cells whose ids are close in value are also spatially close to each other. When this idea is combined with the hierarchical decomposition, you get a very fast framework for indexing and query operations. Before we start with the practical examples, let’s see how the cells are represented as 64-bit integers.

If you are interested in Hilbert curves, I really recommend this article; it is very intuitive and shows some properties of the curve.

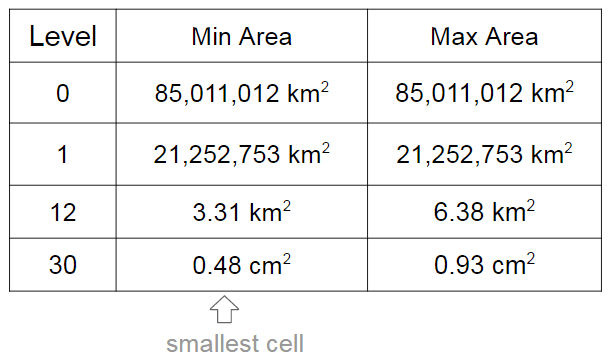

As I already mentioned, cells can be at different levels and cover regions of different sizes. In the S2 library you’ll find 30 levels of hierarchical decomposition. The cell levels and the range of areas they cover are shown in the Google presentation, in the slide that I’m reproducing below:

As you can see, a very cool result of the S2 geometry is that every cm² of the Earth can be represented using a 64-bit integer.
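This claim can be checked with a bit of arithmetic: 6 cube faces subdivided 30 times into 4 children give 6 · 4^30 leaf cells. A quick sketch, assuming a spherical Earth with a mean radius of 6371 km; real leaf cells vary somewhat in area, so this only gives an average:

```python
import math

# Assumption: spherical Earth with a mean radius of 6371 km
EARTH_RADIUS_KM = 6371.0
EARTH_AREA_CM2 = 4 * math.pi * EARTH_RADIUS_KM ** 2 * 1e10  # 1 km^2 = 1e10 cm^2


def num_cells(level):
    """6 cube faces, each split into 4 children at every level."""
    return 6 * 4 ** level


def average_cell_area_cm2(level):
    return EARTH_AREA_CM2 / num_cells(level)


print(num_cells(30))                        # number of leaf cells
print(num_cells(30) < 2 ** 64)              # the count fits in a 64-bit integer
print(round(average_cell_area_cm2(30), 2))  # average leaf-cell area in cm^2
```

The average leaf cell comes out below 1 cm², which is where the "every cm²" figure comes from.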

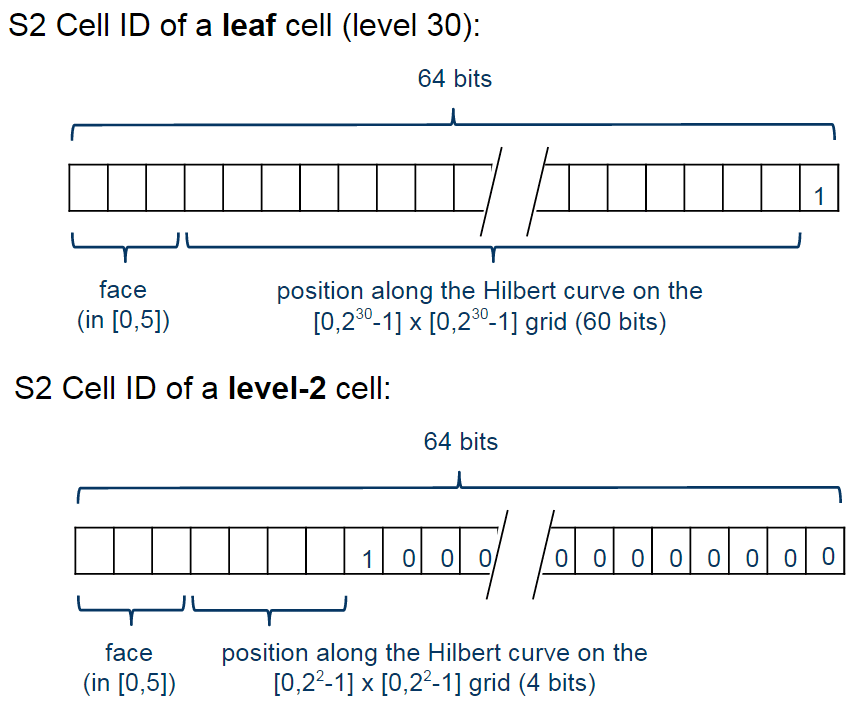

The cells are represented using the following schema:

The first one represents a leaf cell, the cell with the minimum area, usually used to represent points. As you can see, the 3 initial bits are reserved to store the face of the cube onto which the point of the sphere was projected; they are followed by the position of the cell along the Hilbert curve, which is always followed by a “1” bit that marks the level of the cell.

So, to find the level of a cell, all you need to do is check where the last “1” bit is located in its representation. To check containment (whether a cell is contained in another cell), all you have to do is a prefix comparison. These operations are really fast, and they are possible only because of the Hilbert curve enumeration and the hierarchical decomposition method used.
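A minimal sketch of these bit tricks on plain Python integers, written from the layout described above (3 face bits, then 2 bits per level, then the marker bit); the helper names are hypothetical. Comparing prefixes is equivalent to checking that an id falls inside the contiguous range of ids sharing the cell's prefix, which is what `contains` does:

```python
MAX_LEVEL = 30
POS_BITS = 2 * MAX_LEVEL + 1  # 60 bits of Hilbert position + 1 marker bit


def lsb(cell_id):
    """The lowest '1' bit, i.e. the marker that encodes the level."""
    return cell_id & -cell_id


def face(cell_id):
    """The 3 most significant bits hold the cube face (0-5)."""
    return cell_id >> POS_BITS


def level(cell_id):
    """Each level uses 2 bits, so the marker position gives the level."""
    trailing_zeros = lsb(cell_id).bit_length() - 1
    return MAX_LEVEL - trailing_zeros // 2


def contains(cell_id, other_id):
    """True if other_id lies inside cell_id.

    Every descendant shares cell_id's prefix, so the descendants form the
    contiguous id range [cell_id - (lsb - 1), cell_id + (lsb - 1)].
    """
    half_span = lsb(cell_id) - 1
    return cell_id - half_span <= other_id <= cell_id + half_span
```

Applied to the leaf cell id obtained later in this post (10743750136202470315), `face` gives 4 and `level` gives 30.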

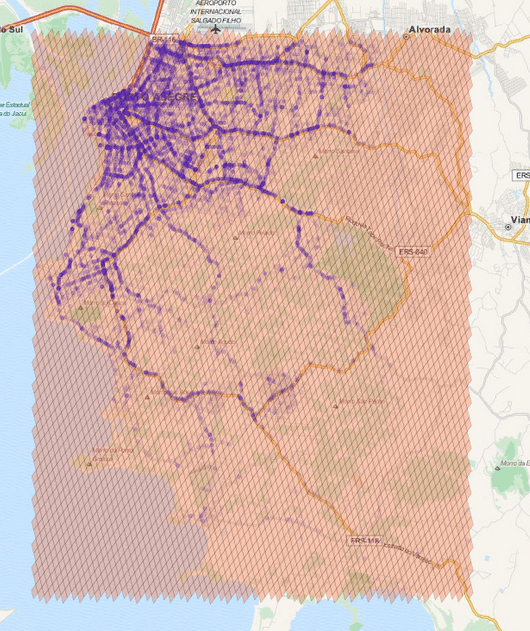

So, if you want to generate cells to cover a region, you can use a method of the library where you specify the maximum number of cells and the minimum and maximum cell levels to be used; an algorithm then approximates the region using the specified parameters. In the example below, I’m using the S2 library to extract some Machine Learning binary features using level 15 cells:

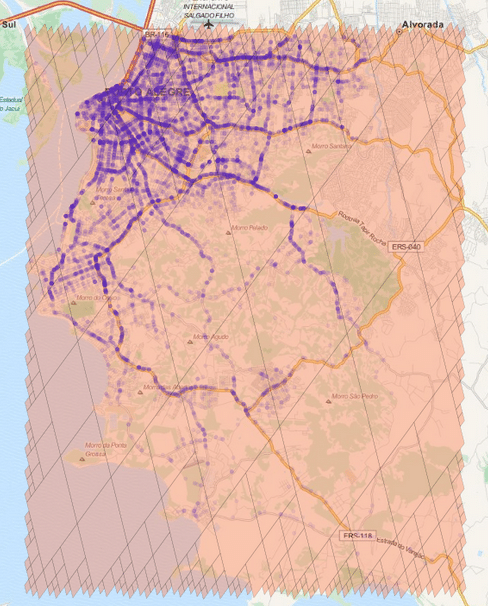

In the image above, the cell regions are represented as transparent polygons over the entire region of interest of my city. Since I used level 15 for both the minimum and the maximum level, all the cells cover regions of similar area. If I change the minimum level to 8 (thus allowing larger cells), the algorithm approximates the region using fewer cells while still trying to keep the approximation precise, as in the example below:

As you can see, we now have a covering that uses larger cells in the center, while the borders are approximated using smaller cells (also note the quad-trees).
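The effect of lowering the minimum level can be reproduced with a deliberately simplified, planar analogue of a region coverer: a quadtree over the unit square instead of the sphere, with no `max_cells` budget. This is not S2RegionCoverer's algorithm (which works on the sphere and also decides which candidates to subdivide in order to respect the cell budget); `cover_rect` is a hypothetical helper for illustration only:

```python
def cover_rect(rect, min_level, max_level):
    """Cover an axis-aligned rectangle of the unit square with quadtree cells.

    rect is (x0, y0, x1, y1). Cells are returned as (level, i, j) tuples.
    A cell is kept whole when it lies fully inside the rectangle and is at
    least min_level; cells that only partially overlap are subdivided until
    max_level, where they are kept as-is (so the covering may spill over
    the border).
    """
    x0, y0, x1, y1 = rect
    covering = []

    def visit(level, i, j):
        size = 1.0 / (1 << level)
        cx0, cy0 = i * size, j * size
        cx1, cy1 = cx0 + size, cy0 + size
        # Skip cells that do not overlap the rectangle at all
        if cx1 <= x0 or cx0 >= x1 or cy1 <= y0 or cy0 >= y1:
            return
        inside = x0 <= cx0 and cx1 <= x1 and y0 <= cy0 and cy1 <= y1
        if level >= min_level and (inside or level == max_level):
            covering.append((level, i, j))
            return
        # Otherwise descend into the 4 children (the quad-tree)
        for di in (0, 1):
            for dj in (0, 1):
                visit(level + 1, 2 * i + di, 2 * j + dj)

    visit(0, 0, 0)
    return covering


rect = (0.1, 0.1, 0.9, 0.9)
fixed = cover_rect(rect, 3, 3)  # min level == max level: equal-sized cells
mixed = cover_rect(rect, 1, 3)  # lower min level: larger cells in the interior
print(len(fixed), len(mixed))   # 64 52
```

As in the S2 examples above, fixing both levels produces equal-sized cells, while a lower minimum level lets the interior be covered by larger cells and leaves the small cells for the borders.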

* In this tutorial I used the Python 2.7 bindings from the following repository. The instructions to compile and install them are in the repository’s README, so I won’t repeat them here.

The first steps to convert latitude/longitude points to the cell representation are shown below:

```python
>>> import s2
>>> latlng = s2.S2LatLng.FromDegrees(-30.043800, -51.140220)
>>> cell = s2.S2CellId.FromLatLng(latlng)
>>> cell.level()
30
>>> cell.id()
10743750136202470315
>>> cell.ToToken()
951977d377e723ab
```

As you can see, we first create an object of the class S2LatLng to represent the lat/lng point and then feed it into the S2CellId class to build the cell representation. After that, we can get the level and id of the cell. There is also a method called ToToken that converts the integer representation into a compact alphanumeric representation that you can parse later using the FromToken method.
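Judging from the output above, the token is the id written as 16 hexadecimal digits, and my understanding is that S2 also drops the trailing zeros, so coarse cells get shorter tokens. A minimal sketch of that conversion under this assumption (`to_token` and `from_token` are hypothetical names, not the bindings' API):

```python
def to_token(cell_id):
    """16 lowercase hex digits with the trailing zeros stripped."""
    return "{:016x}".format(cell_id).rstrip("0")


def from_token(token):
    """Inverse of to_token: right-pad with zeros back to 16 hex digits."""
    return int(token.ljust(16, "0"), 16)
```

With this scheme the leaf cell above becomes `951977d377e723ab`, while the level-0 cell of face 4 (id `0x9000000000000000`) becomes just `9`.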

You can also get the parent cell of that cell (one level above it) and use containment methods to check if a cell is contained by another cell:

```python
>>> parent = cell.parent()
>>> print parent.level()
29
>>> parent.id()
10743750136202470316
>>> parent.ToToken()
951977d377e723ac
>>> cell.contains(parent)
False
>>> parent.contains(cell)
True
```

As you can see, the parent is one level above its child cell (in our case, a leaf cell). The ids are also very similar, differing only in the lowest bits that encode the level, and the containment check is really fast (it only checks whether the id falls within the range of the parent cell’s descendants).
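Computing the parent is also just bit manipulation: clear every bit below the parent's marker position and set the marker there. A minimal sketch, using hypothetical helper names and the same bit layout described earlier, that reproduces the parent id shown above:

```python
MAX_LEVEL = 30


def lsb_for_level(level):
    """Marker bit of a cell at the given level (2 bits per level)."""
    return 1 << (2 * (MAX_LEVEL - level))


def cell_level(cell_id):
    """Level from the position of the lowest '1' bit."""
    trailing_zeros = (cell_id & -cell_id).bit_length() - 1
    return MAX_LEVEL - trailing_zeros // 2


def parent_at(cell_id, level):
    """Ancestor at a coarser level: clear the lower bits, then set the new marker."""
    new_lsb = lsb_for_level(level)
    return (cell_id & -new_lsb) | new_lsb


def parent(cell_id):
    """Immediate parent, one level above."""
    return parent_at(cell_id, cell_level(cell_id) - 1)
```

`parent(10743750136202470315)` gives 10743750136202470316, the same id returned by `cell.parent()`, and `parent_at(10743750136202470315, 0)` gives the face-4 cell `0x9000000000000000`.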

These cells can be stored in a database, and they perform quite well with a B-tree index. To create a collection of cells that covers a region, you can use the S2RegionCoverer class, as in the example below:

```python
>>> from s2 import *
>>> region_rect = S2LatLngRect(
...     S2LatLng.FromDegrees(-30.241701, -51.264871),
...     S2LatLng.FromDegrees(-30.000003, -51.04618))
>>> coverer = S2RegionCoverer()
>>> coverer.set_min_level(8)
>>> coverer.set_max_level(15)
>>> coverer.set_max_cells(500)
>>> covering = coverer.GetCovering(region_rect)
```

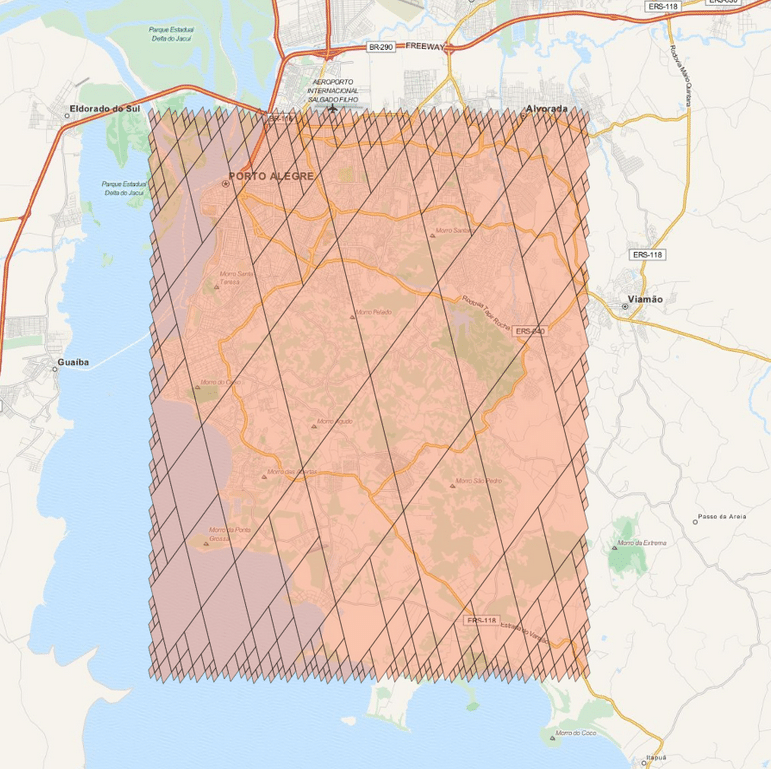

First of all, we define an S2LatLngRect, which is a rectangle delimiting the region that we want to cover. There are also other classes that you can use (to build polygons, for instance); the S2RegionCoverer works with any class derived from the S2Region base class. After defining the rectangle, we instantiate the S2RegionCoverer and then set the aforementioned min/max levels and the maximum number of cells that we want the approximation to generate.
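Because each cell covers a contiguous range of ids, a covering turns into a handful of range scans on an ordinary B-tree index. The sketch below uses Python's built-in `sqlite3` as a stand-in database. Shifting the unsigned 64-bit ids by 2^63 so that they fit SQLite's signed INTEGER while keeping their order is my own assumption, and the table and function names are hypothetical:

```python
import sqlite3

SHIFT = 1 << 63  # assumption: map unsigned 64-bit ids into SQLite's signed range


def to_db(cell_id):
    """Subtracting 2**63 preserves the ordering of the unsigned ids."""
    return cell_id - SHIFT


def cell_range(cell_id):
    """Smallest and largest descendant ids of a cell."""
    lsb = cell_id & -cell_id
    return cell_id - (lsb - 1), cell_id + (lsb - 1)


def build_index(points):
    """points: iterable of (name, leaf_cell_id) pairs."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE places (name TEXT, cell_id INTEGER)")
    # An ordinary SQLite index, which is stored as a B-tree
    conn.execute("CREATE INDEX idx_places_cell ON places (cell_id)")
    conn.executemany("INSERT INTO places VALUES (?, ?)",
                     [(name, to_db(cid)) for name, cid in points])
    return conn


def places_in_covering(conn, covering):
    """One range scan per covering cell."""
    names = []
    for cell_id in covering:
        lo, hi = cell_range(cell_id)
        rows = conn.execute(
            "SELECT name FROM places WHERE cell_id BETWEEN ? AND ? ORDER BY cell_id",
            (to_db(lo), to_db(hi)))
        names.extend(name for (name,) in rows)
    return names
```

Here `covering` would be the list of cell ids produced by `GetCovering` (converted to integers with `id()`), and each point is stored as its leaf cell id.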

If you wish to plot the covering, you can use Cartopy, Shapely and matplotlib, like in the example below:

```python
import matplotlib.pyplot as plt
from s2 import *
from shapely.geometry import Polygon
import cartopy.crs as ccrs
import cartopy.io.img_tiles as cimgt

# The original post used cimgt.MapQuestOSM(); MapQuest's free tiles are no
# longer available, so OpenStreetMap tiles are used here instead
proj = cimgt.OSM()

plt.figure(figsize=(20, 20), dpi=200)
ax = plt.axes(projection=proj.crs)
ax.add_image(proj, 12)
# Extent as [lng_min, lng_max, lat_min, lat_max]
ax.set_extent([-51.411886, -50.922470, -30.301314, -29.94364])

# S2LatLng.FromDegrees expects (latitude, longitude)
region_rect = S2LatLngRect(
    S2LatLng.FromDegrees(-30.241701, -51.264871),
    S2LatLng.FromDegrees(-30.000003, -51.04618))

coverer = S2RegionCoverer()
coverer.set_min_level(8)
coverer.set_max_level(15)
coverer.set_max_cells(500)
covering = coverer.GetCovering(region_rect)

geoms = []
for cellid in covering:
    new_cell = S2Cell(cellid)
    vertices = []
    # Each S2 cell has 4 vertices
    for i in range(4):
        vertex = new_cell.GetVertex(i)
        latlng = S2LatLng(vertex)
        # Shapely/Cartopy expect (x, y) = (longitude, latitude)
        vertices.append((latlng.lng().degrees(),
                         latlng.lat().degrees()))
    geo = Polygon(vertices)
    geoms.append(geo)

print("Total Geometries: {}".format(len(geoms)))

ax.add_geometries(geoms, ccrs.PlateCarree(), facecolor='coral',
                  edgecolor='black', alpha=0.4)
plt.show()
```

The result is shown below:

There is a lot more in the S2 API, and I really recommend exploring it and reading the source code; it is really helpful. S2 cells can be used for indexing, in key-value databases, in B-trees with really good efficiency, and even for Machine Learning purposes (which is my case). Anyway, it is a very useful tool that you should keep in your toolbox. I hope you enjoyed this little tutorial!

– Christian S. Perone

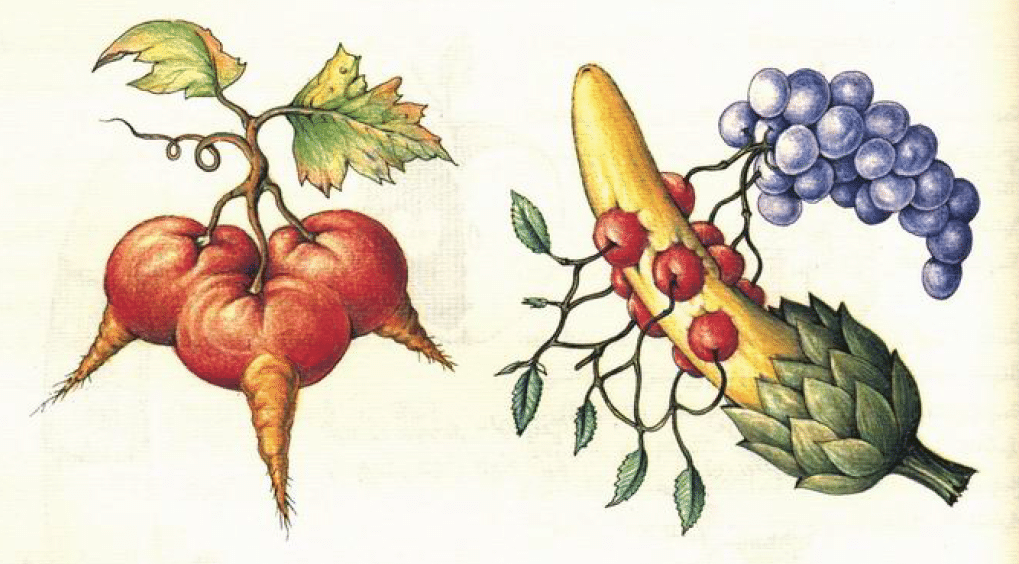

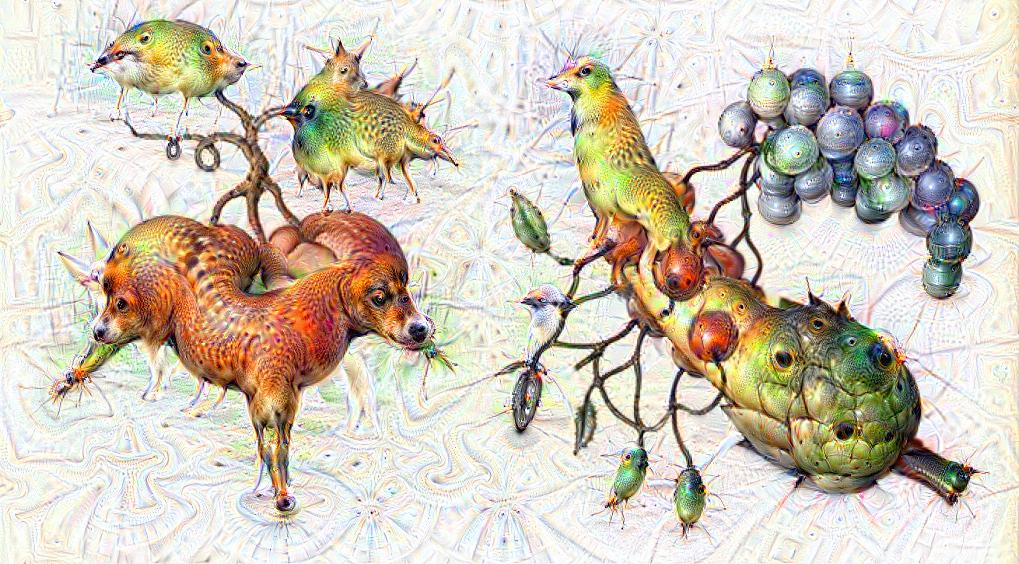

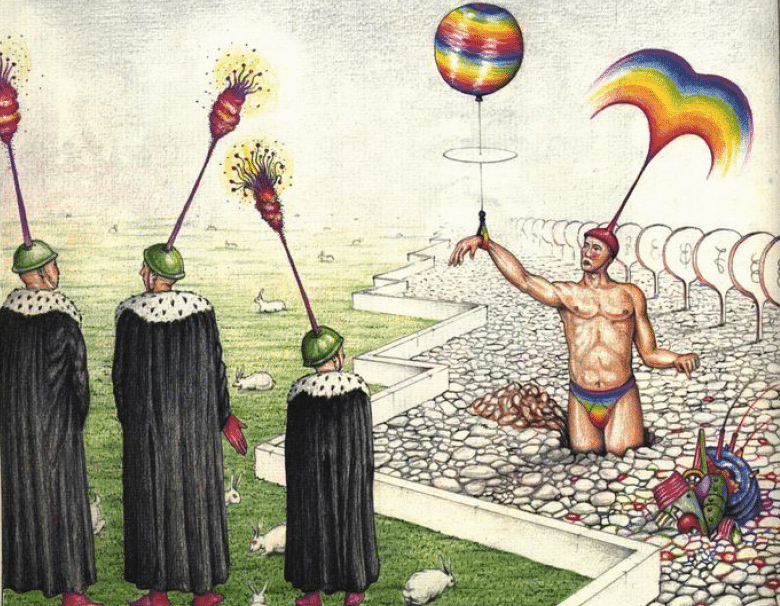

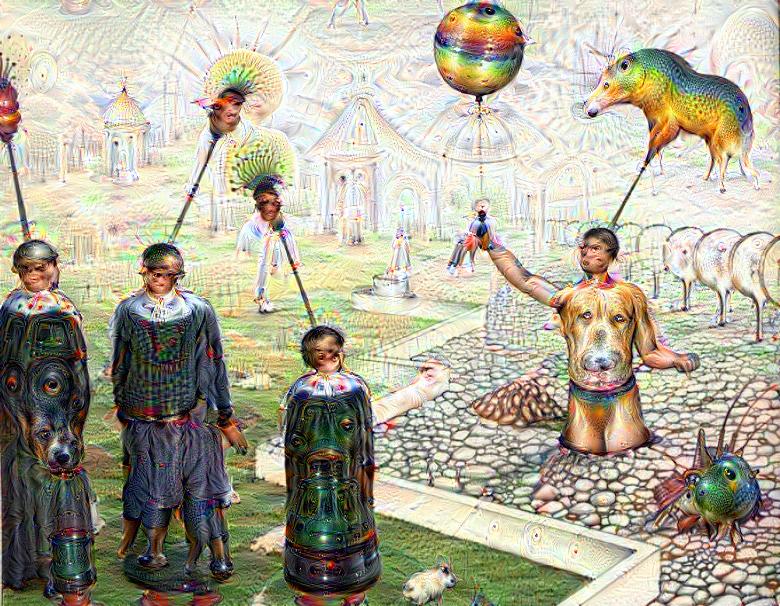

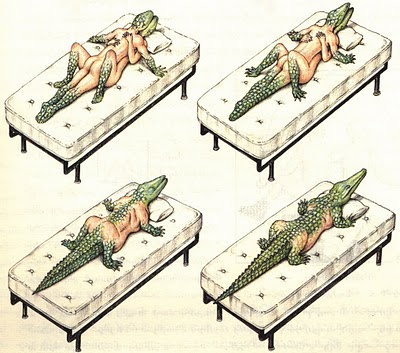

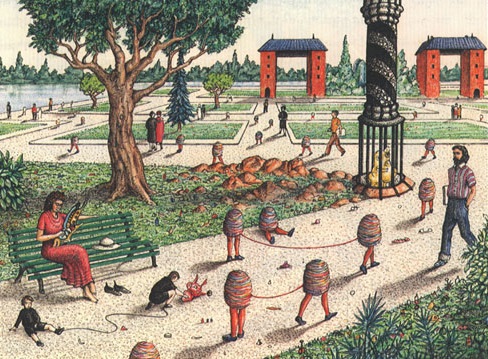

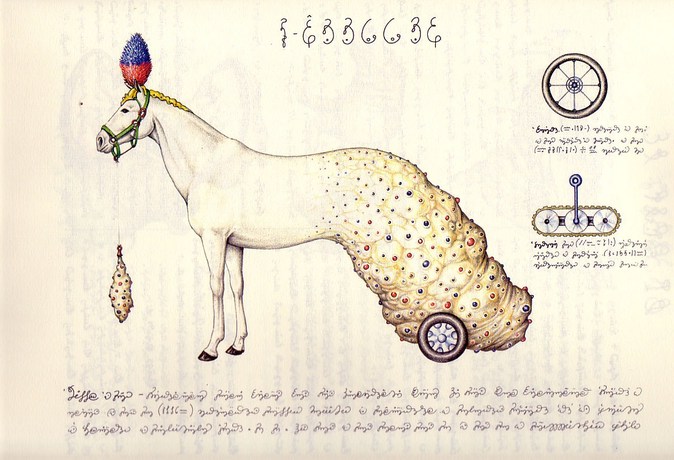

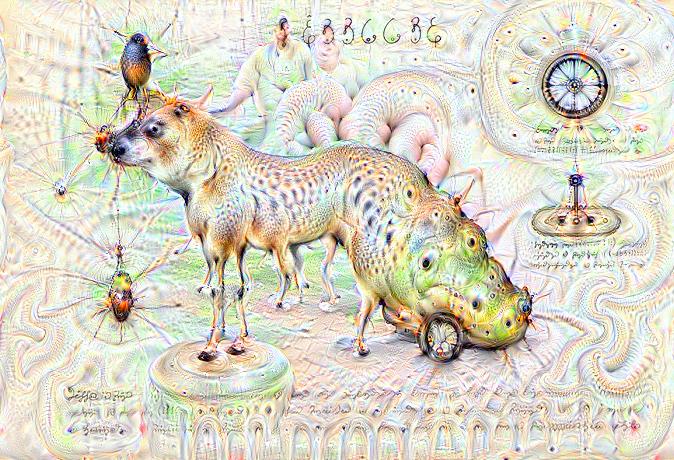

Some time ago I received Luigi Serafini’s Codex Seraphinianus. For those who still haven’t had a chance to take a look at it, I really recommend buying the book; it is the kind of book that will certainly leave a weird feeling in your senses. The Codex looks like a treatise on an obscure world, and it is by far the weirdest book I’ve ever seen, so I decided to use the GoogLeNet model to create inceptionisms (using the Caffe-based code that Google kindly released) from some selected images of the Codex. This process created some very interesting images that I decided to share below (click to enlarge):

I hope you liked it!

– Christian S. Perone

After flying this past weekend (together with Gabriel and Leandro) with Gabriel’s drone (a handmade quadcopter based on the APM 2.6) in our town (Porto Alegre, Brazil), I decided to implement object tracking using OpenCV and Python and see how well simple and fast methods like Meanshift would work. The result was very impressive: although I believe there is still plenty of room for optimization, the algorithm already runs in real time in Python, with good results, on Full HD (1920×1080) video at 30 fps.

Here is the video of the flight that was piloted by Gabriel:

See it in Full HD for more details.

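The algorithm is very simple (less than 50 lines of Python) and straightforward; it can be described as follows:

1. Read the first frame and select the region of interest (ROI) containing the object to track (a building, in this case);
2. Convert the ROI to HSV and compute its normalized hue histogram, masking out pixels with low saturation or brightness;
3. For each new frame, convert it to HSV and compute the back projection of the ROI histogram;
4. Run Meanshift on the back projection to move the tracking window towards the region that best matches the histogram;
5. Draw the tracking window on the frame.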

The complete tracking code is shown below:

```python
import numpy as np
import cv2


def run_main():
    cap = cv2.VideoCapture('upabove.mp4')

    # Read the first frame of the video
    ret, frame = cap.read()
    if not ret:
        raise IOError("Could not read the first frame of the video")

    # Set the ROI (Region of Interest). Actually, this is a
    # rectangle of the building that we're tracking
    c, r, w, h = 900, 650, 70, 70
    track_window = (c, r, w, h)

    # Create mask and normalized histogram
    roi = frame[r:r+h, c:c+w]
    hsv_roi = cv2.cvtColor(roi, cv2.COLOR_BGR2HSV)
    # Ignore pixels with low saturation/value, whose hue is unreliable
    mask = cv2.inRange(hsv_roi, np.array((0., 30., 32.)), np.array((180., 255., 255.)))
    roi_hist = cv2.calcHist([hsv_roi], [0], mask, [180], [0, 180])
    cv2.normalize(roi_hist, roi_hist, 0, 255, cv2.NORM_MINMAX)

    # Stop after 80 iterations or when the window moves by less than 1 pixel
    term_crit = (cv2.TERM_CRITERIA_EPS | cv2.TERM_CRITERIA_COUNT, 80, 1)

    while True:
        ret, frame = cap.read()
        if not ret:  # end of the video
            break

        hsv = cv2.cvtColor(frame, cv2.COLOR_BGR2HSV)
        # Per-pixel likelihood of matching the ROI's hue distribution
        dst = cv2.calcBackProject([hsv], [0], roi_hist, [0, 180], 1)
        # Move the window towards the densest region of the back projection
        ret, track_window = cv2.meanShift(dst, track_window, term_crit)

        x, y, w, h = track_window
        cv2.rectangle(frame, (x, y), (x+w, y+h), 255, 2)
        # cv2.LINE_AA replaces the OpenCV 2.x constant cv2.CV_AA
        cv2.putText(frame, 'Tracked', (x-25, y-10), cv2.FONT_HERSHEY_SIMPLEX,
                    1, (255, 255, 255), 2, cv2.LINE_AA)
        cv2.imshow('Tracking', frame)

        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

    cap.release()
    cv2.destroyAllWindows()


if __name__ == "__main__":
    run_main()
```
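To show roughly what `cv2.calcBackProject` and `cv2.meanShift` do inside the loop, here is a plain-Python sketch of the same idea on a tiny synthetic hue image. It back-projects the ROI hue histogram, then repeatedly re-centers the window on the weighted centroid of the back projection. This is a simplified illustration, not OpenCV's implementation: there is no saturation/value mask, and the convergence test is simplified. All names are hypothetical:

```python
import math


def hue_histogram(hue_roi, bins=180):
    """Hue histogram of the ROI, scaled so the most frequent hue maps to 255.

    Assumes at least one empty bin, so min-max scaling reduces to dividing
    by the peak.
    """
    hist = [0] * bins
    for row in hue_roi:
        for h in row:
            hist[h] += 1
    peak = max(hist)
    return [255.0 * v / peak for v in hist]


def back_project(hue_img, hist):
    """Replace each pixel's hue with the histogram value for that hue."""
    return [[hist[h] for h in row] for row in hue_img]


def mean_shift(weights, window, max_iter=80, eps=1):
    """Move window (x, y, w, h) to the weighted centroid until it stops moving."""
    x, y, w, h = window
    rows, cols = len(weights), len(weights[0])
    for _ in range(max_iter):
        total = sum_x = sum_y = 0.0
        for r in range(y, y + h):
            for c in range(x, x + w):
                wt = weights[r][c]
                total += wt
                sum_x += wt * c
                sum_y += wt * r
        if total == 0:  # nothing to follow inside the window
            break
        # Top-left corner that centers the window on the centroid (round half up)
        new_x = int(math.floor(sum_x / total - (w - 1) / 2.0 + 0.5))
        new_y = int(math.floor(sum_y / total - (h - 1) / 2.0 + 0.5))
        # Keep the window inside the image
        new_x = min(max(new_x, 0), cols - w)
        new_y = min(max(new_y, 0), rows - h)
        moved = abs(new_x - x) + abs(new_y - y)
        x, y = new_x, new_y
        if moved < eps:
            break
    return x, y, w, h


# Synthetic 40x40 hue image: background hue 100, an 8x8 target of hue 10 at (x=25, y=20)
hue = [[100] * 40 for _ in range(40)]
for r in range(20, 28):
    for c in range(25, 33):
        hue[r][c] = 10
roi_hist = hue_histogram([row[25:33] for row in hue[20:28]])
weights = back_project(hue, roi_hist)
print(mean_shift(weights, (20, 15, 8, 8)))  # (25, 20, 8, 8)
```

Starting from a window that only partially overlaps the target, each iteration pulls the window towards the pixels whose hue matches the histogram, until it settles on the target.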

I hope you liked it!